scientific misconduct

Harald Matthes, the boss of the anthroposophical Krankenhaus Havelhoehe and professor of Integrative and Anthroposophical Medicine at the Charite in Berlin, has featured on my blog before (see here and here). Now he is making headlines again.

‘Die Zeit‘ reported that Matthes went on German TV to claim that the rate of severe adverse effects of COVID-19 vaccinations is about 40 times higher than the official figures indicate. In the MDR broadcast ‘Umschau’, Matthes said that his unpublished data show a rate of 0.8% of severe adverse effects. In an interview, he later confirmed this claim. Yet the official figures in Germany indicate that the rate is 0.02%.

How can this be?

ZEIT ONLINE did some research and found that Matthes’ data are based on extremely shoddy science and mistakes. The Charite also distanced itself from Matthes’ evaluation: “The investigation is an open survey and not really a scientific study. The data are not suitable for drawing definitive conclusions regarding incidence figures in the population that can be generalized.” The problems with Matthes’ ‘study’ seem to be sevenfold:

- The data are not published and can thus not be scrutinized.

- Matthes’ definition of a severe adverse effect is not in keeping with the generally accepted definition.

- Matthes did not verify the adverse effects but relied on the information volunteered by people over the Internet.

- Matthes’ survey is based on an online questionnaire accessible to anyone. Thus it is wide open to selection bias.

- The sample size of the survey is around 10,000, which is far too small for generalizable conclusions.

- There is no control group which makes it impossible to differentiate a meaningful signal from mere background noise.

- The data contradict those from numerous other studies that were considerably more rigorous.
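To put the two figures side by side, here is a minimal arithmetic sketch. It uses only the numbers quoted above (0.8%, 0.02%, and a survey of roughly 10,000 respondents); the variable names are mine, and the "expected cases" are simple multiplications, not data from the survey.

```python
# Figures quoted in the post; everything else is plain arithmetic.
official_rate = 0.02 / 100   # official German figure: 0.02% severe adverse effects
claimed_rate = 0.8 / 100     # Matthes' unpublished figure: 0.8%
respondents = 10_000         # approximate size of the online survey

# How many times higher is the claimed rate?
ratio = claimed_rate / official_rate

# How many severe cases would each rate imply among the respondents?
expected_official = official_rate * respondents
expected_claimed = claimed_rate * respondents

print(f"Claimed rate is about {ratio:.0f} times the official rate")
print(f"Implied cases at the official rate: about {expected_official:.0f}")
print(f"Implied cases at the claimed rate:  about {expected_claimed:.0f}")
```

At the official rate, a sample of this size would be expected to contain only a handful of severe cases, so a modest number of additional self-reported, unverified cases from self-selected respondents is enough to shift the estimate dramatically.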

Despite these obvious flaws, Matthes insisted in a conversation with ZEIT ONLINE that the official German incidence figures are incorrect. As Germany already has its fair share of anti-vaxxers, Matthes’ unfounded and irresponsible claims contribute significantly to the public sentiment against COVID vaccinations. They thus endanger public health.

In my view, such behavior amounts to serious professional misconduct. I, therefore, feel that his professional body, the Aerztekammer, should look into it and prevent further harm.

The Lancet is a top medical journal, no doubt. But even such journals can make mistakes, even big ones, as the Wakefield story illustrates. But sometimes, the mistakes are seemingly minor and so well hidden that the casual reader is unlikely to find them. Such mistakes can nevertheless be equally pernicious, as they might propagate untruths or misunderstandings that have far-reaching consequences.

A recent Lancet paper might be an example of this phenomenon. It is entitled “Management of common clinical problems experienced by survivors of cancer“, unquestionably an important subject. Its abstract reads as follows:

_______________________

Improvements in early detection and treatment have led to a growing prevalence of survivors of cancer worldwide.

Models of care fail to address adequately the breadth of physical, psychosocial, and supportive care needs of those who survive cancer. In this Series paper, we summarise the evidence around the management of common clinical problems experienced by survivors of adult cancers and how to cover these issues in a consultation. Reviewing the patient’s history of cancer and treatments highlights potential long-term or late effects to consider, and recommended surveillance for recurrence. Physical consequences of specific treatments to identify include cardiac dysfunction, metabolic syndrome, lymphoedema, peripheral neuropathy, and osteoporosis. Immunotherapies can cause specific immune-related effects most commonly in the gastrointestinal tract, endocrine system, skin, and liver. Pain should be screened for and requires assessment of potential causes and non-pharmacological and pharmacological approaches to management. Common psychosocial issues, for which there are effective psychological therapies, include fear of recurrence, fatigue, altered sleep and cognition, and effects on sex and intimacy, finances, and employment. Review of lifestyle factors including smoking, obesity, and alcohol is necessary to reduce the risk of recurrence and second cancers. Exercise can improve quality of life and might improve cancer survival; it can also contribute to the management of fatigue, pain, metabolic syndrome, osteoporosis, and cognitive impairment. Using a supportive care screening tool, such as the Distress Thermometer, can identify specific areas of concern and help prioritise areas to cover in a consultation.

_____________________________

You can see nothing wrong? Me neither! We need to dig deeper into the paper to find what concerns me.

In the actual article, the authors state that “there is good evidence of benefit for … acupuncture …”[1]; the same message was conveyed in one of the tables. In support of these categorical statements, the authors quote the current Cochrane review entitled “Acupuncture for cancer pain in adults”. Its abstract reads as follows:

Background: Forty per cent of individuals with early or intermediate stage cancer and 90% with advanced cancer have moderate to severe pain and up to 70% of patients with cancer pain do not receive adequate pain relief. It has been claimed that acupuncture has a role in management of cancer pain and guidelines exist for treatment of cancer pain with acupuncture. This is an updated version of a Cochrane Review published in Issue 1, 2011, on acupuncture for cancer pain in adults.

Objectives: To evaluate efficacy of acupuncture for relief of cancer-related pain in adults.

Search methods: For this update CENTRAL, MEDLINE, EMBASE, PsycINFO, AMED, and SPORTDiscus were searched up to July 2015 including non-English language papers.

Selection criteria: Randomised controlled trials (RCTs) that evaluated any type of invasive acupuncture for pain directly related to cancer in adults aged 18 years or over.

Data collection and analysis: We planned to pool data to provide an overall measure of effect and to calculate the number needed to treat to benefit, but this was not possible due to heterogeneity. Two review authors (CP, OT) independently extracted data adding it to data extraction sheets. Data sheets were compared and discussed with a third review author (MJ) who acted as arbiter. Data analysis was conducted by CP, OT and MJ.

Main results: We included five RCTs (285 participants). Three studies were included in the original review and two more in the update. The authors of the included studies reported benefits of acupuncture in managing pancreatic cancer pain; no difference between real and sham electroacupuncture for pain associated with ovarian cancer; benefits of acupuncture over conventional medication for late stage unspecified cancer; benefits for auricular (ear) acupuncture over placebo for chronic neuropathic pain related to cancer; and no differences between conventional analgesia and acupuncture within the first 10 days of treatment for stomach carcinoma. All studies had a high risk of bias from inadequate sample size and a low risk of bias associated with random sequence generation. Only three studies had low risk of bias associated with incomplete outcome data, while two studies had low risk of bias associated with allocation concealment and one study had low risk of bias associated with inadequate blinding. The heterogeneity of methodologies, cancer populations and techniques used in the included studies precluded pooling of data and therefore meta-analysis was not carried out. A subgroup analysis on acupuncture for cancer-induced bone pain was not conducted because none of the studies made any reference to bone pain. Studies either reported that there were no adverse events as a result of treatment, or did not report adverse events at all.

Authors’ conclusions: There is insufficient evidence to judge whether acupuncture is effective in treating cancer pain in adults.

This conclusion is undoubtedly in stark contrast to the categorical statement of the Lancet authors: “there is good evidence of benefit for … acupuncture …“

What should be done to prevent people from getting misled in this way?

- The Lancet should correct the error. It might be tempting to do this by simply replacing the term ‘good’ with ‘some’. However, this would still be misleading, as there is some evidence for almost any type of bogus therapy.

- Authors, reviewers, and editors should do their job properly and check the original sources of their quotes.

PS

In case someone argues that the Cochrane review is just one of many, here is the conclusion of an overview of 15 systematic reviews on the subject: The … findings emphasized that acupuncture and related therapies alone did not have clinically significant effects at cancer-related pain reduction as compared with analgesic administration alone.

Yes, today is ‘WORLD SLEEP DAY‘ and you are probably in bed hoping this post will put you back to sleep.

This study aimed to synthesise the best available evidence on the safety and efficacy of using moxibustion and/or acupuncture to manage cancer-related insomnia (CRI).

The PRISMA framework guided the review. Nine databases were searched from its inception to July 2020, published in English or Chinese. Randomised clinical trials (RCTs) of moxibustion and or acupuncture for the treatment of CRI were selected for inclusion. The methodological quality was assessed using the method suggested by the Cochrane collaboration. The Cochrane Review Manager was used to conduct a meta-analysis.

Fourteen RCTs met the eligibility criteria; 7 came from China. Twelve RCTs used the Pittsburgh Sleep Quality Index (PSQI) score as continuous data and a meta-analysis showed positive effects of moxibustion and or acupuncture (n = 997, mean difference (MD) = -1.84, 95% confidence interval (CI) = -2.75 to -0.94, p < 0.01). Five RCTs using continuous data and a meta-analysis in these studies also showed significant difference between two groups (n = 358, risk ratio (RR) = 0.45, 95% CI = 0.26-0.80, I² = 39%).

The authors concluded that the meta-analyses demonstrated that moxibustion and or acupuncture showed a positive effect in managing CRI. Such modalities could be considered an add-on option in the current CRI management regimen.

Even at the risk of endangering your sleep, I disagree with this conclusion. Here are some of my reasons:

- Chinese acupuncture trials are invariably positive, which means they are as reliable as a £4 note.

- Most trials were of poor methodological quality.

- Only one made an attempt to control for placebo effects.

- Many followed the A+B versus B design which invariably produces (false-) positive results.

- Only 4 out of 14 studies mentioned adverse events which means that 10 violated research ethics.
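The point about the A+B versus B design can be illustrated with a toy simulation. This is only a sketch under assumptions I have made up for the purpose: improvement under usual care (B) is normally distributed with mean 2 and SD 3, and the add-on (A) has no specific effect at all but brings a nonspecific benefit (placebo response, extra attention) of 1.5 points. None of these numbers come from the review.

```python
import random
from statistics import mean


def simulate_a_plus_b_vs_b(n_per_arm=200, nonspecific_effect=1.5, seed=42):
    """Return the mean extra improvement in the 'A+B' arm over the 'B' arm.

    Hypothetical assumptions: A has NO specific effect; it only adds a
    nonspecific benefit because patients know they receive something extra.
    """
    rng = random.Random(seed)
    # Usual care alone: improvement drawn from a normal distribution.
    arm_b = [rng.gauss(2.0, 3.0) for _ in range(n_per_arm)]
    # Usual care plus the add-on: same distribution plus the nonspecific benefit.
    arm_a_plus_b = [
        rng.gauss(2.0, 3.0) + nonspecific_effect for _ in range(n_per_arm)
    ]
    return mean(arm_a_plus_b) - mean(arm_b)


difference = simulate_a_plus_b_vs_b()
print(f"Mean extra improvement in the A+B arm: {difference:.2f}")
```

Because there is no placebo in the control arm, the design cannot separate this nonspecific benefit from a specific effect of A; under these assumptions the A+B arm comes out ahead even though A does nothing.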

Sorry to have disturbed your sleep!

Bioresonance is an alternative therapeutic and diagnostic method employing a device developed in Germany by Scientology member Franz Morell in 1977. The bioresonance machine was further developed and marketed by Morell’s son-in-law Erich Rasche and is also known as ‘MORA’ therapy (MOrell + RAsche). Bioresonance is based on the notion that one can diagnose and treat illness with electromagnetic waves and that, via resonance, such waves can influence disease on a cellular level.

On this blog, we have discussed the idiocy of bioresonance several times (for instance, here and here). My favorite study of bioresonance is the one where German investigators showed that the device cannot even differentiate between living and non-living materials. Despite the lack of plausibility and proof of efficacy, research into bioresonance continues.

The aim of this study was to evaluate if bioresonance therapy can offer quantifiable results in patients with recurrent major depressive disorder and with mild, moderate, or severe depressive episodes.

The study included 140 patients suffering from depression, divided into three groups.

- The first group (40 patients) received solely bioresonance therapy.

- The second group (40 patients) received pharmacological treatment with antidepressants combined with bioresonance therapy.

- The third group (60 patients) received solely pharmacological treatment with antidepressants.

The assessment of depression was made using the Hamilton Depression Rating Scale, with 17 items, at the beginning of the bioresonance treatment and the end of the five weeks of treatment.

The results showed a statistically significant difference for the treatment methods applied to the analyzed groups (p=0.0001). The authors also found that the therapy accelerates the healing process in patients with depressive disorders. Improvement was observed for the analyzed groups, with a decrease of the mean values between the initial and final phase of the level of depression, of delta for Hamilton score of 3.1, 3.8 and 2.3, respectively.

The authors concluded that the bioresonance therapy could be useful in the treatment of recurrent major depressive disorder with moderate depressive episodes independently or as a complementary therapy to antidepressants.

One could almost think that this is a reasonably sound study. But why did it generate such a surprising result?

When reading the full paper, the first thing one notices is that it is poorly presented and badly written. Thus there is much confusion and little clarity. The questions keep coming until one comes across this unexpected remark: the study was a retrospective study…

This explains some of the confusion and it certainly explains the surprising results. It remains unclear how the patients were selected/recruited but it is obvious that the groups were not comparable in several ways. It also becomes very clear that with the methodology used, one can make any nonsense look effective.

In the end, I am left with the impression that mutton is being presented as lamb, even worse: I think someone here is misleading us by trying to convince us that an utterly bogus therapy is effective. In my view, this study is as clear an example of scientific misconduct as I have seen for a long time.

The purpose of this recent investigation was to evaluate the association between chiropractic utilization and use of prescription opioids among older adults with spinal pain … at least this is what the abstract says. The actual paper tells us something a little different: The objective of this investigation was to evaluate the impact of chiropractic utilization upon the use of prescription opioids among Medicare beneficiaries aged 65 plus. That sounds to me much more like trying to find a CAUSAL relationship than an association.

Anyway, the authors conducted a retrospective observational study in which they examined a nationally representative multi-year sample of Medicare claims data, 2012–2016. The study sample included 55,949 Medicare beneficiaries diagnosed with spinal pain, of whom 9,356 were recipients of chiropractic care and 46,593 were non-recipients. They measured the adjusted risk of filling a prescription for an opioid analgesic for up to 365 days following the diagnosis of spinal pain. Using Cox proportional hazards modeling and inverse weighted propensity scoring to account for selection bias, they compared recipients of both primary care and chiropractic to recipients of primary care alone regarding the risk of filling a prescription.

The adjusted risk of filling an opioid prescription within 365 days of initial visit was 56% lower among recipients of chiropractic care as compared to non-recipients (hazard ratio 0.44; 95% confidence interval 0.40–0.49).

The authors concluded that, among older Medicare beneficiaries with spinal pain, use of chiropractic care is associated with significantly lower risk of filling an opioid prescription.

The way this conclusion is formulated is well in accordance with the data. However, throughout the paper, the authors imply that chiropractic care is the cause of fewer opioid prescriptions. For instance: The observed advantage of early chiropractic care mirrors the results of a prior study on a population of adults aged 18–84. The suggestion is that chiropractic saves patients from taking opioids.

It does not take much imagination to guess why some people might want to create this impression. I am sure that chiropractors would be delighted if the US public felt that their manipulations were the solution to the opioid crisis. For many months, they have been trying hard to pretend this is true. Yet, I know of no convincing data to demonstrate it.

The new investigation thus turns out to be a lamentable piece of pseudo research. Retrospective observational studies can obviously not establish cause and effect, particularly if they do not even account for the severity of the symptoms or the outcomes of the treatment.
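Why unmeasured symptom severity matters can be shown with a toy simulation. The sketch below is not a re-analysis of the Medicare data; every probability in it is an assumption I invented for illustration. In this made-up world, chiropractic care has no effect whatsoever on opioid use, but patients with severe pain are both less likely to see a chiropractor and more likely to fill an opioid prescription.

```python
import random


def simulate_confounding(n=50_000, seed=1):
    """Return (opioid risk with chiropractic, opioid risk without).

    Hypothetical assumptions: half of all patients have severe pain;
    severe pain lowers the chance of seeing a chiropractor (5% vs 30%)
    and raises the chance of an opioid prescription (40% vs 10%).
    Chiropractic care itself does NOT influence opioid use here.
    """
    rng = random.Random(seed)
    # counts[chiro] = [number of patients, number filling an opioid prescription]
    counts = {True: [0, 0], False: [0, 0]}
    for _ in range(n):
        severe = rng.random() < 0.5
        chiro = rng.random() < (0.05 if severe else 0.30)
        opioid = rng.random() < (0.40 if severe else 0.10)  # ignores chiro
        counts[chiro][0] += 1
        counts[chiro][1] += int(opioid)
    risk_chiro = counts[True][1] / counts[True][0]
    risk_no_chiro = counts[False][1] / counts[False][0]
    return risk_chiro, risk_no_chiro


risk_chiro, risk_no_chiro = simulate_confounding()
print(f"Opioid risk with chiropractic:    {risk_chiro:.3f}")
print(f"Opioid risk without chiropractic: {risk_no_chiro:.3f}")
print(f"Crude risk ratio:                 {risk_chiro / risk_no_chiro:.2f}")
```

Under these invented assumptions, the crude comparison shows a markedly lower opioid risk among chiropractic users although the treatment does nothing. Statistical adjustment can only remove such a distortion if the confounder is actually recorded.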

Three days ago, I reported a new study of homeopathy. At the time, I had not seen the full paper. Now, thanks to a kind reader sending it to me, I can report more details.

To recap:

In this double-blind, cluster-randomized, placebo-controlled, four parallel arms, community-based, clinical trial, a 20,000-person sample of the population residing in Ward Number 57 of the Tangra area, Kolkata, was randomized in a 1:1:1:1 ratio of clusters to receive one of three homeopathic medicines:

- Bryonia alba 30cH,

- Gelsemium sempervirens 30cH,

- Phosphorus 30cH,

- or an identical-looking placebo.

The treatment period lasted for 3 (children) or 6 (adults) days. All the participants, who were aged 5 to 75 years, received ascorbic acid (vitamin C) tablets of 500 mg, once per day for 6 days. In addition, instructions on a healthy diet and general hygienic measures, including handwashing, social distancing, and proper use of facemasks and gloves, were given to all the participants.

No new confirmed COVID-19 cases were diagnosed in the target population during the follow-up timeframe of 1 month (December 20, 2020 to January 19, 2021), thus making the trial inconclusive.

The Phosphorus group had the least exposure to COVID-19 compared with the other groups. In comparison with placebo, the occurrence of unconfirmed COVID-19 cases was significantly less in the Phosphorus group (week 1: odds ratio [OR], 0.1; 95% confidence interval [CI], 0.06 to 0.16; week 2: OR, 0.004; 95% CI, 0.0002 to 0.06; week 3: OR, 0.007; 95% CI, 0.0004 to 0.11; week 4: OR, 0.009; 95% CI, 0.0006 to 0.14), but not in the Bryonia or Gelsemium groups.

The authors concluded that the trial was inconclusive. The possible effect exerted by Phosphorus necessitates further investigation.

When I first blogged about this, I commented with this question: If you conduct a COVID prevention trial, would you not make sure that rigorous testing for COVID of all participants is implemented? Having seen the full paper, the question remains unanswered. Here is all that the authors write about the outcome measures:

(a) Primary outcome—Occurrence of newly diagnosed (confirmed by detection of the SARS-CoV-2 RNA in nasopharyngeal swab by real-time reverse transcription polymerase chain reaction (RT-PCR) or rapid antigen test) COVID-19 infections as per Government of India records.

(b) Secondary outcome—Occurrence of unconfirmed COVID19 cases as assessed clinically during home visits. It was defined as abrupt onset (within the last 10 days) of fever (100.4°F or 38°C body temperature) with two or more of the following: loss of taste or smell, dry cough, shortness of breath, sore throat, congestion or runny nose, headache, malaise, fatigue, myalgia, limb or joint pain, chest pain or pressure, conjunctivitis, diarrhea, nausea or vomiting, skin rashes, discoloration of fingers or toes.

The timeline was up to 30 days after completing the recommended dosage or once the person reported COVID-19 positive, whichever was earlier. Data were collected weekly by teams of homeopaths from home visits and/or via telephone, whenever required.

I am not entirely sure what this means but I think “as per Government of India records” indicates that they did not bother to systematically measure the primary endpoint of their study. Instead, they relied on the data from occasional unsystematic testing. My suspicion is further confirmed by the authors’ statement in their discussion section: “a manual search of the Government records during the trial phase could not identify a single confirmed COVID-19 positive case belonging to the study population … Enhanced numbers of testing could have changed the outcome of the trial“.

If my suspicion is true, the study is a joke – and not a good one at that. It would mean that considerable funds and efforts have been wasted. It would also mean that the conclusion drawn by the authors “the trial was inconclusive” is inaccurate. It was not inconclusive but it was fatally flawed from its outset.

A few weeks ago, I blogged about a pilot study of homeopathy to prevent COVID infections. Now a similar trial has been published – also in the journal ‘HOMEOPATHY’.

In this double-blind, cluster-randomized, placebo-controlled, four parallel arms, community-based, clinical trial, a 20,000-person sample of the population residing in Ward Number 57 of the Tangra area, Kolkata, was randomized in a 1:1:1:1 ratio of clusters to receive one of three homeopathic medicines:

- Bryonia alba 30cH,

- Gelsemium sempervirens 30cH,

- Phosphorus 30cH,

- or an identical-looking placebo.

The treatment period lasted for 3 (children) or 6 (adults) days. All the participants, who were aged 5 to 75 years, received ascorbic acid (vitamin C) tablets of 500 mg, once per day for 6 days. In addition, instructions on a healthy diet and general hygienic measures, including handwashing, social distancing, and proper use of facemasks and gloves, were given to all the participants.

No new confirmed COVID-19 cases were diagnosed in the target population during the follow-up timeframe of 1 month (December 20, 2020 to January 19, 2021), thus making the trial inconclusive.

The Phosphorus group had the least exposure to COVID-19 compared with the other groups. In comparison with placebo, the occurrence of unconfirmed COVID-19 cases was significantly less in the Phosphorus group (week 1: odds ratio [OR], 0.1; 95% confidence interval [CI], 0.06 to 0.16; week 2: OR, 0.004; 95% CI, 0.0002 to 0.06; week 3: OR, 0.007; 95% CI, 0.0004 to 0.11; week 4: OR, 0.009; 95% CI, 0.0006 to 0.14), but not in the Bryonia or Gelsemium groups.

The authors concluded that the trial was inconclusive. The possible effect exerted by Phosphorus necessitates further investigation.

How can this be?

If you conduct a COVID prevention trial, would you not make sure that rigorous testing for COVID of all participants is implemented?

Unfortunately, I cannot access the full article – if someone can, please send it to me. From reading just the abstract I cannot help feeling that there is something very wrong here. And from looking at the list of authors’ affiliations I am not convinced that the authors are all that objective about the potential of homeopathy:

- Department of Community Medicine, D.N.De Homoeopathic Medical College and Hospital, Govt. of West Bengal, Tangra, Kolkata, West Bengal, India.

- Department of Organon of Medicine and Homoeopathic Philosophy, D.N.De Homoeopathic Medical College and Hospital, Govt. of West Bengal, Tangra, Kolkata, West Bengal, India.

- Department of Pathology & Microbiology, D.N.De Homoeopathic Medical College and Hospital, Govt. of West Bengal, Tangra, Kolkata, West Bengal, India.

- Department of Forensic Medicine & Toxicology, D.N.De Homoeopathic Medical College and Hospital, Govt. of West Bengal, Tangra, Kolkata, West Bengal, India.

- Department of Materia Medica, D.N.De Homoeopathic Medical College and Hospital, Govt. of West Bengal, Tangra, Kolkata, West Bengal, India.

- Department of Repertory, D.N.De Homoeopathic Medical College and Hospital, Govt. of West Bengal, Tangra, Kolkata, West Bengal, India.

- Department of Practice of Medicine, D.N.De Homoeopathic Medical College and Hospital, Govt. of West Bengal, Tangra, Kolkata, West Bengal, India.

- Department of Surgery, D.N.De Homoeopathic Medical College and Hospital, Govt. of West Bengal, Tangra, Kolkata, West Bengal, India.

- Department of Homoeopathic Pharmacy, D.N.De Homoeopathic Medical College and Hospital, Govt. of West Bengal, Tangra, Kolkata, West Bengal, India.

- Department of Physiology, D.N.De Homoeopathic Medical College and Hospital, Govt. of West Bengal, Tangra, Kolkata, West Bengal, India.

- Department of Anatomy, D.N.De Homoeopathic Medical College and Hospital, Govt. of West Bengal, Tangra, Kolkata, West Bengal, India.

- Department of Obstetrics & Gynecology, D.N.De Homoeopathic Medical College and Hospital, Govt. of West Bengal, Tangra, Kolkata, West Bengal, India.

Yes, there is a new paper on homeopathic Arnica!

And yes, it arrives at a positive conclusion.

How is this possible?

Let’s have a look.

The authors conducted a systematic review and metaanalysis, following a predefined protocol, of all studies on the use of homeopathic Arnica montana in surgery. They included all randomized and nonrandomized studies comparing homeopathic Arnica to a placebo or to another active comparator and calculated two quantitative meta-analyses and appropriate sensitivity analyses.

Twenty-three publications reported on 29 different comparisons. One study had to be excluded because no data could be extracted, leaving 28 comparisons. Eighteen comparisons used placebo controls, nine comparisons an active control, and in one case Arnica was compared to no treatment. The metaanalysis of the placebo-controlled trials yielded an overall effect size of Hedges’ g = 0.18 (95% confidence interval -0.007 to 0.373; p = 0.059). Active comparator trials yielded a highly heterogeneous significant effect size of g = 0.26. This is mainly due to the large effect size of non-randomized studies, which converges against zero in the randomized trials.

The authors concluded that homeopathic Arnica has a small effect size over and against placebo in preventing excessive hematoma and other sequelae of surgeries. The effect is comparable to that of anti-inflammatory substances.
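It is worth checking what the placebo-controlled result quoted above actually implies. The sketch below takes only the reported 95% confidence interval (-0.007 to 0.373) and, assuming a symmetric interval based on the normal approximation, backs out the implied standard error and two-sided p-value. The function names are mine, and this is a rough plausibility check, not the review authors' method.

```python
import math


def normal_cdf(z):
    """Cumulative distribution function of the standard normal distribution."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def p_value_from_ci(lower, upper, z_crit=1.96):
    """Approximate two-sided p-value implied by a symmetric 95% CI.

    Assumes the interval is estimate +/- z_crit * SE (normal approximation).
    """
    estimate = (lower + upper) / 2.0         # midpoint of the interval
    se = (upper - lower) / (2.0 * z_crit)    # implied standard error
    z = estimate / se
    return 2.0 * (1.0 - normal_cdf(abs(z)))


lower, upper = -0.007, 0.373   # 95% CI reported for the placebo-controlled trials
p = p_value_from_ci(lower, upper)
includes_zero = lower <= 0.0 <= upper

print(f"Interval includes zero: {includes_zero}")
print(f"Implied two-sided p-value: {p:.3f}")
```

The interval includes zero and the implied p-value sits above the conventional 0.05 threshold, so on the authors' own figures the pooled placebo-controlled effect is not statistically significant.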

This review has many remarkable (or should I say, suspect?) features, e.g.:

- Its authors are famous (or should I say, infamous) advocates of homeopathy not known for their objectivity (including Prof Walach).

- Some of the trials included in the analysis are unpublished conference proceedings usually only published as an abstract (ref 29).

- Others were published in journals such as ‘Allgemeine Homoeopathische Zeitung‘ which is unlikely to manage a decent peer-review system (ref 46).

- Some trials used Arnica in low potencies that contained active molecules, and nobody doubts that active molecules can have effects (ref 32 and 37).

- One study seems to be a retrospective case-control study (ref 38).

- The primary endpoints of several studies were not those evaluated in the review (e.g. ref 42).

- One study used a combination of herbal and homeopathic arnica in the verum group which means the observed effect cannot be attributed to homeopathy (ref 31).

Perhaps the strangest feature relates to the methodology used by the review authors: “Where data were only available in graphs, data were read off the graph by enlarging the display and reading the figures with a ruler.” I have never before come across this method which must be wide open to bias.

Considering all of these odd features, I think that the small effect size over and against placebo in preventing excessive hematoma and other sequelae of surgeries reported by the review authors is most likely due to a range of factors that have nothing whatsoever to do with homeopathy.

So, does the new review show that homeopathic Arnica is “efficacious”? I don’t think so!

This study assessed the effectiveness of Oscillococcinum in the protection from upper respiratory tract infections (URTIs) in patients with COPD who had been vaccinated against influenza infection over the 2018-2019 winter season.

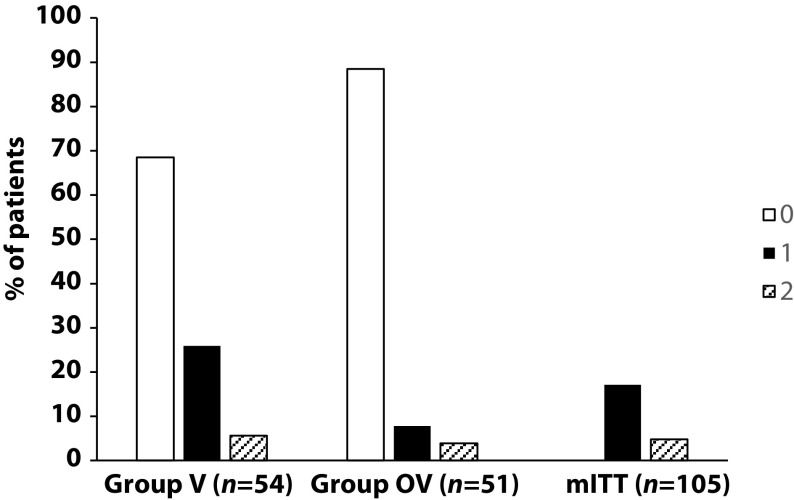

A total of 106 patients were randomized into two groups:

- group V received influenza vaccination only

- group OV received influenza vaccination plus Oscillococcinum® (one oral dose per week from inclusion in the study until the end of follow-up, with a maximum of 6 months follow-up over the winter season).

The primary endpoint was the incidence rate of URTIs (number of URTIs/1000 patient-treatment exposure days) during follow-up compared between the two groups.

There was no significant difference in any of the demographic characteristics, baseline COPD, or clinical data between the two treatment groups (OV and V). The URTI incidence rate was significantly higher in group V than in group OV (2.9 versus 1.2 episodes/1000 treatment days, difference OV-V = -1.7; p=0.0312). There was a significant delay in occurrence of an URTI episode in the OV group versus the V group (mean ± standard error: 48.7 ± 3.0 versus 67.0 ± 2.8 days, respectively; p=0.0158). Limitations to this study include its small population size and the self-recording by patients of the number and duration of URTIs and exacerbations.

The authors concluded that the use of Oscillococcinum in patients with COPD led to a significant decrease in incidence and a delay in the appearance of URTI symptoms during the influenza-exposure period. The results of this study confirm the impact of this homeopathic medication on URTIs in patients with COPD.

Primary endpoint, comparison of the number of upper respiratory tract infections in the two treatment groups during follow-up

This prospective, randomized, single-center study was funded by Laboratoires Boiron, was conducted in the Pneumology Department of Charles Nicolle Hospital, Tunis, and was written up by a commercial firm specializing in writing for the pharmaceutical industry. The latter point may explain why it reads well and elegantly glosses over the many flaws of the trial.

If I did not know better, I might suspect that the study was designed to deceive us (Boiron would, of course, never do this!): The primary endpoint was the incidence rate of URTIs (number of URTIs/1000 patient-treatment exposure days) in the two groups during the follow-up period. This rate is calculated as the number of episodes of URTIs per 1000 days of follow-up/treatment exposure. The rates were then compared between the OV and V groups. The following symptoms were considered indicative of an URTI: fever, shivering, runny or blocked nose, sneezing, muscular aches/pain, sore throat, watery eyes, headaches, nausea/vomiting, diarrhoea, fatigue and loss of appetite.

This means that there was no verification whatsoever of the primary endpoint. In itself, this flaw would perhaps not be so bad. But put it together with the fact that patients were not blinded (there were no placebos!), it certainly is fatal.

In essence, the study shows that patients who believe they are receiving treatment will also perceive themselves as having fewer URTIs.

SURPRISE, SURPRISE!

‘Survive Cancer’ is a UK charity that promotes and researches orthomolecular medicine in the treatment of cancer, septic shock, mental health, and other illnesses. They claim to provide information about research and trials and a multi-pronged treatment approach for sufferers of cancer. Specifically for cancer, they recommend the following ‘top ten‘ so-called alternative medicines (SCAMs):

- Gerson

- Vitamin C therapy

- Anti-angiogenic therapy

- Immunotherapy

- Photodynamic-/Photo-therapy

- Melatonin

- Bisphosphonates (for bone cancer)

- Coley’s toxins

- Salvestrols

- Pain management

Interesting?

Yes, because it is misleading in the extreme. Here, for example, is what they say about an old favorite of mine (and of Prince Charles):

Gerson Therapy

Max Gerson was a German doctor who in the early twentieth century devised an anti-cancer diet and regime based on radically altering the sodium/potassium ratio in the body for the better, thus allowing optimal cellular functions, and nutrition, coupled with intensive detoxification through the use of coffee enemas.

Coffee enemas (see Detox, in First Steps, 5 Rs of Cancer Survival,) are a scientifically established, and medically accepted, way of stimulating the production of glutathione-s-transferases, a major liver detoxifying enzyme family. The diet is vegetarian, low in protein, with fresh organic fruit and vegetable juices daily, and certain specified supplements, such as potassium, niacin and vitamin C. At the end of his life Gerson testified before Congress with the details of 50 cases he had cured. His daughter, Charlotte, has continued Gerson’s work in the U.S. However, she has not made an attempt to integrate modern nutritional state-of-the-art knowledge into the therapy. This is being done by Gar Hildebrand. A retrospective study showed that the Gerson therapy is much more effective than chemotherapy for ovarian cancer and melanoma, both particularly aggressive forms of cancer. Gerson himself had notable successes with various kinds of brain tumour, even after some neurological damage had occurred. Orthomolecular Oncology suggests combining Immunopower with Gerson as an update. We can also cite a remarkable case of a 11 year remission in Multiple Myeloma, another fast-moving, relentless cancer without conventional cure, otherwise conventionally untreated, achieved through a combination of Gerson and modern orthomolecular approaches. Gerson is a powerful, comprehensive therapy, still capable of producing cures, even in its unmodulated form. However, it requires great discipline, time, and extra assistance. Read Gerson’s book and/or contact the Gerson Institute for further details.

One does not need to be a genius to predict that cancer patients following this sort of advice will significantly shorten their lives, diminish their quality of life and empty their bank accounts. One does, however, need to be a genius to predict when the UK Charity Commission is finally going to do something about the many UK charities that prey on vulnerable cancer patients.

PS

I almost forgot: the patrons of this charity are:

- HRH Princess Michael of Kent

- The Earl Baldwin of Bewdley (Co-Chairman of the Parliamentary Committee for Alternative and Complementary Medicine)

- Dr Damien Downing, MBBS, (Editor of The Journal of Nutritional and Environmental Medicine)

- Mr Peter J Gravett, MB, MRCS, FRCPath.

- Dr P J Kingsley, MB, BS, MRCS, LRCP, FAAEM, DA, D.Obst. RCOG