causation

It has been reported that a well-known conservative activist, Kelly Canon, from Arlington, Texas, USA, has tragically died. She was famous for peddling COVID-19 vaccine misinformation. She died of complications caused by the virus, just a few weeks after attending a “symposium” against the vaccines.

“Another tragedy and loss for our Republican family. Our dear friend Kelly Canon lost her battle with pneumonia today. Kelly will be forever in our hearts as a loyal and beloved friend and Patriot. Gone way too soon We will keep her family in our prayers,” the Arlington Republican Club said in a statement.

Her death was said to be “from COVID-related pneumonia.” Canon had announced on Facebook in November that her employer had granted her a religious exemption for the COVID-19 vaccine. “No jabby-jabby for me! Praise GOD!” she wrote at the time.

Canon had been an outspoken critic of COVID-19 vaccine mandates and pandemic-related restrictions. In one of her final Facebook posts, Canon shared several links to speeches she attended at a “COVID symposium” in Burleson in early December devoted to dissuading people from getting the COVID-19 vaccines that are currently available. The event was organized by God Save Our Children, which bills itself as “a conservative group that is fighting against the use of experimental vaccines on our children.”

Canon had shared similar content on Twitter, where her most recent post was a YouTube video featuring claims that the coronavirus pandemic was “planned” in advance and part of a global conspiracy.

As news of her death spread Tuesday, pro-vaccine commentators flooded her Facebook page with cruel comments and mocking memes, while her supporters unironically praised her for being a “warrior for liberty” to the very end.

___________________________

A religious exemption?

What for heaven’s sake is that?

I feel sad for every death caused by COVID and its complications. If the death is caused by ignorance, it renders the sadness all the more profound.

Yesterday, it was reported that Indian scientists had found the mode of action of homeopathic remedies. This is the newspaper article:

And this seems to be the abstract of the actual paper:

Homeopathic medicines contain ultra-low concentrations of metal and compounds, and it is challenging to classify homeopathic potencies using modern characterization tools. This work presents a novel experimental tool for classifying various homeopathic medicines under a low-frequency generated electromagnetic (EM) fields. A custom-built primary coil is used for generating EM fields at different excitation frequencies. The potentized test samples were prepared at decimal dilution scale of Ferrum with α‑lactose monohydrate and exhibited significant and distinct induced EM responses in the second sensing coil. The measured responses decrease logarithmically due to reducing Ferrum concentration. The resolution improved in higher potencies from 0.03 µV at 300 Hz to 0.24 µV at 4.8 kHz. Different compounds of homeopathic medicines were also investigated to produce distinct induced EM characteristics. These results were correlated with Raman spectroscopy, impedance analyser, and FT-IR analysis. The experimental investigation confirmed the classification of potencies and the technique developed to detect ultra-low metallic concentrations.

I might be a bit slow on the uptake – but I don’t see how this investigation proves anything. Perhaps someone can explain it to me?

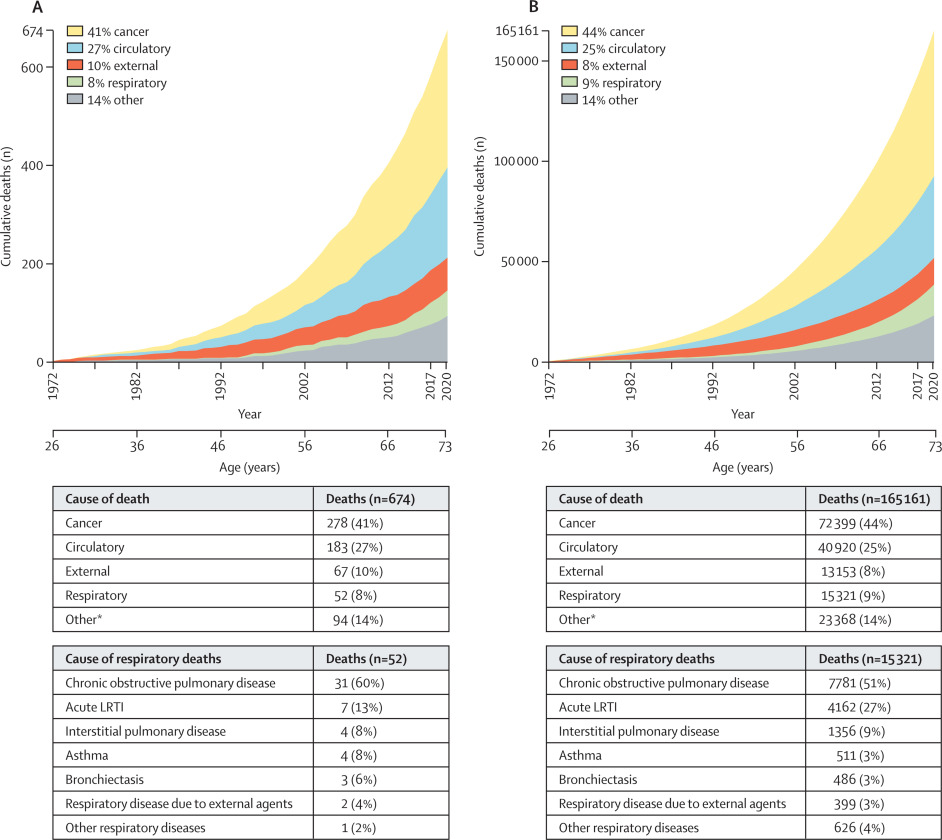

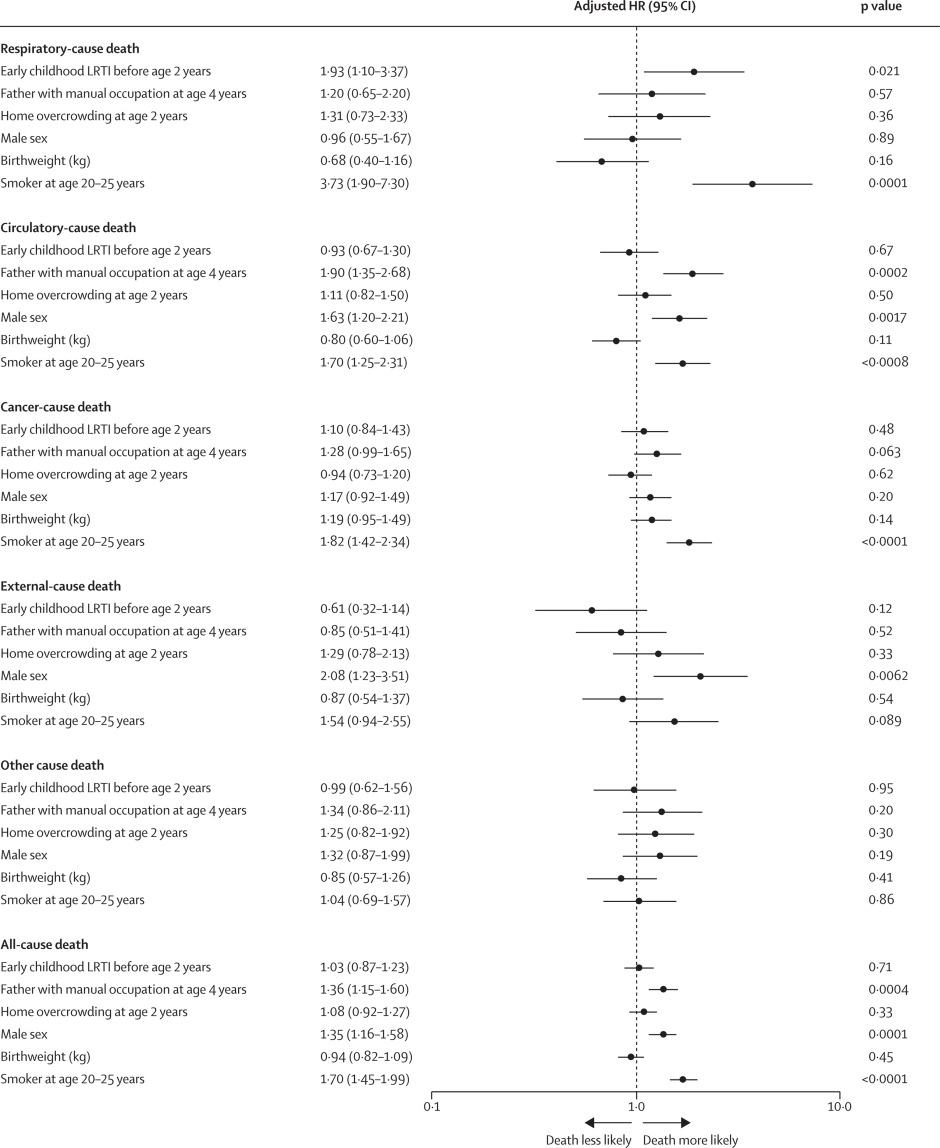

Lower respiratory tract infections (LRTIs) in early childhood are known to influence lung development and lifelong lung health, but their link to premature adult death from respiratory disease is unclear. This analysis aimed to estimate the association between early childhood LRTI and the risk and burden of premature adult mortality from respiratory disease.

This longitudinal observational cohort study used data collected prospectively by the Medical Research Council National Survey of Health and Development in a nationally representative cohort recruited at birth in March 1946, in England, Scotland, and Wales. It evaluated the association between LRTI during early childhood (age <2 years) and death from respiratory disease from age 26 through 73 years. Early childhood LRTI occurrence was reported by parents or guardians. Cause and date of death were obtained from the National Health Service Central Register. Hazard ratios (HRs) and population attributable risk associated with early childhood LRTI were estimated using competing risks Cox proportional hazards models, adjusted for childhood socioeconomic position, childhood home overcrowding, birthweight, sex, and smoking at age 20–25 years. The researchers compared mortality within the cohort studied with national mortality patterns and estimated corresponding excess deaths occurring nationally during the study period.

5362 participants were enrolled in March, 1946, and 4032 (75%) continued participating in the study at age 20–25 years. 443 participants with incomplete data on early childhood (368 [9%] of 4032), smoking (57 [1%]), or mortality (18 [<1%]) were excluded. 3589 participants aged 26 years (1840 [51%] male and 1749 [49%] female) were included in the survival analyses from 1972 onwards. The maximum follow-up time was 47·9 years. Among 3589 participants, 913 (25%) who had an LRTI during early childhood were at greater risk of dying from respiratory disease by age 73 years than those with no LRTI during early childhood (HR 1·93, 95% CI 1·10–3·37; p=0·021), after adjustment for childhood socioeconomic position, childhood home overcrowding, birthweight, sex, and adult smoking. This finding corresponded to a population attributable risk of 20·4% (95% CI 3·8–29·8) and 179 188 (95% CI 33 806–261 519) excess deaths across England and Wales between 1972 and 2019.

The authors concluded that, in this prospective, life-spanning, nationally representative cohort study, LRTI during early childhood was associated with almost a two times increased risk of premature adult death from respiratory disease, and accounted for one-fifth of these deaths.
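For readers who like to check the figures, here is a minimal sketch that re-derives three of the numbers in the abstract. It is not the authors' method: the p-value is recovered from the confidence interval with a normal approximation, and the population attributable risk uses Levin's simple formula with the hazard ratio standing in for the relative risk. The variable names are mine.

```python
import math

# Participant flow reported in the abstract.
followed_up = 4032
excluded = {"early childhood": 368, "smoking": 57, "mortality": 18}
analysed = followed_up - sum(excluded.values())

# Reported hazard ratio and 95% confidence interval.
hr, ci_low, ci_high = 1.93, 1.10, 3.37

# Standard error of log(HR), recovered from the CI width
# (assumption: the interval is symmetric on the log scale).
se_log_hr = (math.log(ci_high) - math.log(ci_low)) / (2 * 1.96)
z = math.log(hr) / se_log_hr
# Two-sided p-value from the standard normal distribution.
p_value = math.erfc(abs(z) / math.sqrt(2))

# Levin's formula (assumption: HR used in place of the relative risk).
exposed_fraction = 913 / 3589
par_levin = exposed_fraction * (hr - 1) / (1 + exposed_fraction * (hr - 1))

print(analysed, round(p_value, 3), round(par_levin, 3))
```

The participant count and the p-value agree with the abstract. The crude attributable risk comes out at roughly 19%, a little below the reported 20·4%, presumably because the published estimate comes from the adjusted model rather than from this shortcut.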

What has that got to do with so-called alternative medicine?

Nothing!

Yet, I feel that this study is so remarkable that I need to report on it nonetheless.

What do the findings indicate?

I am not sure. Perhaps they confirm that our genetic makeup is hugely important in determining our health. Thus even the earliest signs of weakness can provide an indication of what might happen in later life.

Whatever the meaning, I find this study fascinating and hope you agree.

The ‘keto diet’ is a currently popular high-fat, low-carbohydrate diet; it limits the intake of glucose which results in the production of ketones by the liver and their uptake as an alternative energy source by the brain. It is said to be an effective treatment for intractable epilepsy. In addition, it is being promoted as a so-called alternative medicine (SCAM) for a wide range of conditions, including:

- weight loss,

- cognitive and memory enhancement,

- type II diabetes,

- cancer,

- neurological and psychiatric disorders.

Now, it has been reported that the ‘keto diet’ may be linked to higher levels of cholesterol and double the risk of cardiovascular events. In the study, researchers defined a low-carb, high-fat (LCHF) diet as one in which more than 45% of total daily calories come from fat and no more than 25% come from carbohydrates. The study, which has so far not been peer-reviewed, was presented Sunday at the American College of Cardiology’s Annual Scientific Session Together With the World Congress of Cardiology.

“Our study rationale came from the fact that we would see patients in our cardiovascular prevention clinic with severe hypercholesterolemia following this diet,” said Dr. Iulia Iatan from the Healthy Heart Program Prevention Clinic, St. Paul’s Hospital, and University of British Columbia’s Centre for Heart Lung Innovation in Vancouver, Canada, during a presentation at the session. “This led us to wonder about the relationship between these low-carb, high-fat diets, lipid levels, and cardiovascular disease. And so, despite this, there’s limited data on this relationship.”

The researchers compared the diets of 305 people eating an LCHF diet with about 1,200 people eating a standard diet, using health information from the United Kingdom database UK Biobank, which followed people for at least a decade. They found that people on the LCHF diet had higher levels of low-density lipoprotein and apolipoprotein B. Apolipoprotein B is a protein that coats LDL cholesterol particles and can predict heart disease better than elevated levels of LDL cholesterol can. The researchers also noticed that the LCHF diet participants’ total fat intake was higher in saturated fat and had double the consumption of animal sources (33%) compared to those in the control group (16%). “After an average of 11.8 years of follow-up – and after adjustment for other risk factors for heart disease, such as diabetes, high blood pressure, obesity, and smoking – people on an LCHF diet had more than two times higher risk of having several major cardiovascular events, such as blockages in the arteries that needed to be opened with stenting procedures, heart attack, stroke, and peripheral arterial disease.” Their press release also cautioned that their study “can only show an association between the diet and an increased risk for major cardiac events, not a causal relationship,” because it was an observational study, but their findings are worth further investigation, “especially when approximately 1 in 5 Americans report being on a low-carb, keto-like or full keto diet.”

I have to say that I find these findings not in the slightest bit surprising and would fully expect the relationship to be causal. The current craze for this diet is concerning and we need to warn consumers that they might be doing themselves considerable harm.

Other authors have recently pointed out that, within the first 6-12 months of initiating the keto diet, transient decreases in blood pressure, triglycerides, and glycosylated hemoglobin, as well as increases in HDL and weight loss may be observed. However, the aforementioned effects are generally not seen after 12 months of therapy. Despite the diet’s favorable effect on HDL-C, the concomitant increases in LDL-C and very-low-density lipoproteins (VLDL) may lead to increased cardiovascular risks. And another team of researchers has warned that “given often-temporary improvements, unfavorable effects on dietary intake, and inadequate data demonstrating long-term safety, for most individuals, the risks of ketogenic diets may outweigh the benefits.”

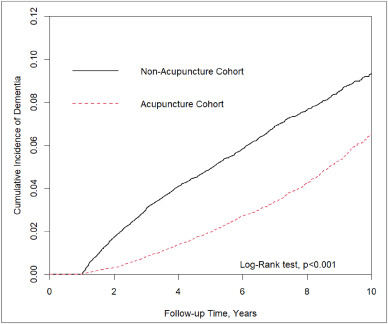

In this retrospective matched-cohort study, Chinese researchers investigated the association of acupuncture treatment for insomnia with the risk of dementia. They collected data from the National Health Insurance Research Database (NHIRD) of Taiwan to analyze the incidence of dementia in patients with insomnia who received acupuncture treatment.

The study included 152,585 patients, selected from the NHIRD, who were newly diagnosed with insomnia between 2000 and 2010. The follow-up period ranged from the index date to the date of dementia diagnosis, date of withdrawal from the insurance program, or December 31, 2013. A 1:1 propensity score method was used to match an equal number of patients (N = 18,782) in the acupuncture and non-acupuncture cohorts. The researchers employed Cox proportional hazards models to evaluate the risk of dementia. The cumulative incidence of dementia in both cohorts was estimated using the Kaplan–Meier method, and the difference between them was assessed through a log-rank test.

Patients with insomnia who received acupuncture treatment were observed to have a lower risk of dementia (adjusted hazard ratio = 0.54, 95% confidence interval = 0.50–0.60) than those who did not undergo acupuncture treatment. The cumulative incidence of dementia was significantly lower in the acupuncture cohort than in the non-acupuncture cohort (log-rank test, p < 0.001).

The researchers concluded that acupuncture treatment significantly reduced or slowed the development of dementia in patients with insomnia.

They could be correct, of course. But, then again, they might not be. Nobody can tell!

As many who are reading these lines know: CORRELATION IS NOT CAUSATION.

But if acupuncture was not the cause for the observed differences, what could it be? After all, the authors used clever statistics to make sure the two groups were comparable!

The problem here is, of course, that they can only make the groups comparable for variables that were measured. These were about 20 parameters mostly related to medication intake and concomitant diseases. This leaves a few hundred potentially relevant variables that were not quantified and could thus not be accounted for.

My bet would be lifestyle: it is conceivable that the acupuncture group had acupuncture because they were generally more health-conscious. Living a relatively healthy life might reduce the dementia risk entirely unrelated to acupuncture. According to Occam’s razor, this explanation is miles more likely than the one about acupuncture.
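To see how easily an unmeasured variable can produce such a result, here is a toy simulation. It is purely illustrative: the trait `health_conscious` and all the probabilities are invented assumptions, not data from the study. In this made-up world, acupuncture has no effect whatsoever on dementia, yet the acupuncture group still ends up with a clearly lower risk.

```python
import random

def simulate(n=200_000, seed=1):
    """Return the dementia risk ratio (acupuncture vs none) in a toy population."""
    rng = random.Random(seed)
    counts = {True: [0, 0], False: [0, 0]}  # treated -> [people, dementia cases]
    for _ in range(n):
        # Unmeasured lifestyle trait (hypothetical, 50% prevalence).
        health_conscious = rng.random() < 0.5
        # Health-conscious people seek acupuncture more often (assumed rates).
        acupuncture = rng.random() < (0.6 if health_conscious else 0.2)
        # Dementia risk depends on lifestyle only; acupuncture plays no role.
        dementia = rng.random() < (0.05 if health_conscious else 0.15)
        counts[acupuncture][0] += 1
        counts[acupuncture][1] += dementia
    risk = {k: cases / people for k, (people, cases) in counts.items()}
    return risk[True] / risk[False]

risk_ratio = simulate()
print(f"risk ratio, acupuncture vs none: {risk_ratio:.2f}")
```

The ratio comes out well below 1 even though the treatment does nothing. Matching on measured variables cannot remove this kind of bias if the lifestyle trait was never recorded.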

So, what this study really demonstrates or implies is, I think, this:

- The propensity score method can never be perfect in generating completely comparable groups.

- The JTCM publishes rubbish.

- Correlation is not causation.

- To establish causation in clinical medicine, RCTs are usually the best option.

- Occam’s razor can be useful when interpreting research findings.

I had all but forgotten about these trials until a comment by ‘Mojo’ (thanks Mojo!) reminded me of this article in the JRSM by M.E. Dean. It reviewed these early trials of homeopathy back in 2006. Here are the crucial excerpts:

The homeopath in both trials was a Dr Herrmann, who received a 1-year contract in February 1829 to test homeopathy with the Russian military.3 The first study took place at the Military Hospital in the market town of Tulzyn, in the province of Podolya, Ukraine.4 At the end of 3 months, 164 patients had been admitted, 123 pronounced cured, 18 were convalescing, 18 still sick, and six had died. The homeopathic ward received many gravely ill patients, and the small number of deaths was shown at autopsy to be due to advanced gross pathologies. The results were interesting enough for the Russian government to order Herrmann to the Regional Military Hospital at St Petersburg to take part in a larger trial, supervised by a Dr Gigler. Patients were admitted to an experimental homeopathic ward, for treatment by Herrmann, and comparisons were made with the success rate in the allopathic wards, as happened in Tulzyn. The novelty was Gigler’s inclusion of a ‘no treatment’ ward where patients were not subject to conventional drugging and bleeding, or homeopathic dosing. The untreated patients benefited from baths, tisanes, good nutrition and rest, but also:

‘During this period, the patients were additionally subjects of an innocent deception. In order to deflect the suspicion that they were not being given any medicine, they were prescribed pills made of white breadcrumbs or cocoa, lactose powder or salep infusions, as happened in the homeopathic ward.’3 (page 415)

The ‘no treatment’ patients, in fact, did better than those in both the allopathic and homeopathic wards. The trial had important implications not just for homeopathy but also for the excessive allopathic drugging and bleeding that was prevalent. As a result of the report, homeopathy was banned in Russia for some years, although allopathy was not.

… A well-known opponent of homeopathy, Carl von Seidlitz, witnessed the St Petersburg trial and wrote a hostile report.5 He then conducted a homeopathic drug test in February 1834 at the Naval Hospital in the same city in which healthy nursing staff received homeopathically-prepared vegetable charcoal or placebo in a single-blind cross-over design.6 Within a few months, Armand Trousseau and colleagues were giving placebo pills to their Parisian patients; perhaps in the belief that they were testing homeopathy, and fully aware they were testing a placebo response.7,8 A placebo-controlled homeopathic proving took place in Nuremberg in 1835 and even included a primitive form of random assignment—identical vials of active and placebo treatment were shuffled before distribution.9 Around the same time in England, Sir John Forbes treated a diarrhoea outbreak after dividing his patients into two groups: half received allopathic ‘treatment as usual’ and half got bread pills. He saw no difference in outcome, and when he reported the experiment in 1846 he added that the placebos could just as easily have been homeopathic tablets.10 In 1861, a French doctor gave placebo pills to patients with neurotic symptoms, and his attitude is representative: he called the placebo ‘orthodox homeopathy’, because, as he said, ‘Bread pills or globules of Aconitum 30c or 40c amount to the same thing’.11

Yes, this post is yet again about the harm chiropractors do.

No, I am not obsessed with the subject – I merely consider it to be important.

This is a case presentation of a 44-year-old male who was transferred from another emergency department for left homonymous inferior quadrantanopia noted on an optometrist visit. He reported sudden onset left homonymous hemianopia after receiving a high-velocity cervical spine adjustment at a chiropractor appointment for chronic neck pain a few days prior.

The CT angiogram of the head and neck revealed bilateral vertebral artery dissection at the left V2 and right V3 segments. MRI brain confirmed an acute infarct in the right medial occipital lobe. His right PCA stroke was likely embolic from the injured right V3 but possibly from the left V2 as well. As the patient reported progression from a homonymous hemianopia to a quadrantanopia, he likely had a migrating embolus.

The authors discussed that arterial dissection accounts for about 2% of all ischemic strokes, but possibly 8–25% of those in patients less than 45 years old. Vertebral artery dissection (VAD) can result from a range of causes, from trauma during sports, motor vehicle accidents, and chiropractic neck manipulations to violent coughing or sneezing.

It is estimated that 1 in 20,000 spinal manipulations results in vertebral artery aneurysm/dissection. Patients who have multiple chronic conditions are reporting higher use of so-called alternative medicine (SCAM), including chiropractic manipulation. Education about the association between VAD and chiropractor maneuvers can be beneficial to the public as these are preventable acute ischemic strokes. In addition, VAD symptoms can be subtle and patients presenting to chiropractors may have distracting pain masking their deficits. Evaluating for appropriateness of cervical manipulation in high‐risk patients and detecting early clinical signs of VAD by chiropractors can be beneficial in preventing acute ischemic strokes in young patients.

Here we have a rare instance where the physicians who treated the chiro-victim were sufficiently motivated to present their findings and document them in the medical literature. Their report was published in 2021 as an abstract in conference proceedings. In other words, the report is not easy to find. Even though two years have passed, the full article does not seem to have emerged, and chances are that it will never be published.

The points I am trying to make are as follows:

- Complications after chiropractic manipulation do happen and are probably much more frequent than chiros want us to believe.

- They are only rarely reported in the medical literature because the busy clinicians who end up treating the victims do not consider this a priority and because many cases are settled in or out of court.

- Normally, it would be the ethical/moral duty of the chiros who have inflicted the damage to do the reporting.

- Yet, they seem too busy ripping off more patients by doing neck manipulations that do more harm than good.

- And then they complain that the evidence is insufficient!!!

It has been reported that a young woman’s visit to a chiropractor left her unable to walk due to a torn artery.

Mariah Bond, 29, went to visit a chiropractor in the hope of getting some relief from her neck pain. During the appointment, the chiropractor quickly twisted her neck from side to side. “It cracked both ways and I’d seen chiropractor videos so I thought it was normal but when I stood up I got super dizzy,” Mariah recalled. Next, Mariah started profusely vomiting and her hand began to tingle. Then she was rushed to a hospital.

It took a few hours before the doctors could find the diagnosis. “I was still throwing up constantly, it was non-stop. I couldn’t open my eyes because if I did I’d start throwing up because I was so dizzy,” Mariah said. “I was transferred via ambulance to another hospital where they did a CT scan and confirmed that I was having a stroke.”

It turned out that Mariah’s chiropractor dissected an artery in her neck which then limited the blood supply to the brain. Mariah was kept in the hospital for five days while her condition was monitored. During that time, she was left unable to walk. But slowly she did become able to rely on a zimmer frame to get around. “I couldn’t walk properly or correctly use my hands to eat, it was like I was a child. It was very weird. My brain was there but I couldn’t do it,” she stated. “My first stroke was a cerebral stroke and they were saying that I probably had a mini-stroke as I was having weird feelings in my legs. They were very confused because that wasn’t common with the stroke I had, so they said that I probably had two.”

Within a fortnight, Mariah was able to walk again but had to have physiotherapy for two months before she could return to work. After her last CT scan, she received the good news that the dissected vessel had completely healed. She said: “I was very strong-willed at the time because everyone was telling me how well I was handling this. I think my husband was more scared than I was, poor thing.”

Mariah has vowed never to visit a chiropractor again and is doing her best to raise awareness of the damage they can cause. “I was shocked because I’m so young and you don’t really hear about young people having strokes, especially from the chiropractor. I’m pretty paranoid with my neck now. I know I probably shouldn’t be but sometimes if I have a weird feeling in my head, it would probably be called PTSD, I automatically start thinking am I having a stroke? I start freaking out. I’d tell people not to go to a chiropractor. I’ve already told a million people not to do it. Just don’t go or at least don’t let them do your neck.”

____________________________

I would be surprised if this case ever got written up as a proper case report and published in a medical journal. We did a survey years ago where we found over 35 cases of severe complications after chiropractic in the UK within a period of 12 months. The most amazing result was that none of these cases had been published. In other words, under-reporting was precisely 100%.

Mariah’s case might be a true rarity, or it might be a fairly common event. It might be a most devastating occurrence, or there could be far worse events.

We simply do not know because under-reporting is huge.

Meanwhile, chiropractors – the professionals who should long have made sure that under-reporting becomes minimal or non-existent – claim that there is no evidence that strokes happen at all or regularly or often. They can do this because the medical literature seems to confirm their opinion. The only reporting system that seems to exist, the “chiropractic patient incident reporting and learning system” (CPiRLS), is for several reasons woefully inadequate and also plagued by under-reporting.

So, what advice can I possibly give to consumers in such a situation? I feel that the only thing one can recommend is to

stay well clear of chiropractors

until they finally present us with sufficient and convincing data.

Homeopathic remedies are highly diluted formulations without proven clinical benefits, traditionally believed not to cause adverse events. Nonetheless, published literature reveals severe local and non-liver-related systemic side effects. This paper presents the first series on homeopathy-related severe drug-induced liver injury (DILI) from a single center.

A retrospective review of records from January 2019 to February 2022 identified 9 patients with liver injury attributed to homeopathic formulations. Competing causes were comprehensively excluded. Chemical analysis was performed on retrieved formulations using triple quadrupole gas chromatography-mass spectrometry and inductively coupled plasma atomic emission spectroscopy.

Males predominated with a median age of 54 years. The most typical clinical presentation was acute hepatitis, followed by acute on chronic liver failure. All patients developed jaundice, and ascites were notable in one-third of the patients. Five patients had underlying chronic liver disease. COVID-19 prevention was the most common indication for homeopathic use. Probable DILI was seen in 77.8%, and hepatocellular injury predominated (66.7%). Four (44.4%) patients died (3 with chronic liver disease) at a median follow-up of 194 days. Liver histopathology showed necrosis, portal and lobular neutrophilic inflammation, and eosinophilic infiltration with cholestasis. A total of 29 remedies were consumed between 9 patients, and 15 formulations were analyzed. Toxicology revealed industrial solvents, corticosteroids, antibiotics, sedatives, synthetic opioids, heavy metals, and toxic phyto-compounds, even in ‘supposed’ ultra-dilute formulations.

The authors concluded that homeopathic remedies potentially result in severe liver injury, leading to death in those with underlying liver disease. The use of mother tinctures, insufficient dilution, poor manufacturing practices, adulteration and contamination, and the presence of direct hepatotoxic herbals were the reasons for toxicity. Physicians, the public, and patients must realize that Homeopathic drugs are not ‘gentle placebos.’

Over a decade ago, we published a systematic review entitled “Adverse effects of homeopathy: a systematic review of published case reports and case series”:

Aim: The aim of this systematic review was to critically evaluate the evidence regarding the adverse effects (AEs) of homeopathy.

Method: Five electronic databases were searched to identify all relevant case reports and case series.

Results: In total, 38 primary reports met our inclusion criteria. Of those, 30 pertained to direct AEs of homeopathic remedies; and eight were related to AEs caused by the substitution of conventional medicine with homeopathy. The total number of patients who experienced AEs of homeopathy amounted to 1159. Overall, AEs ranged from mild-to-severe and included four fatalities. The most common AEs were allergic reactions and intoxications. Rhus toxicodendron was the most frequently implicated homeopathic remedy.

Conclusion: Homeopathy has the potential to harm patients and consumers in both direct and indirect ways. Clinicians should be aware of its risks and advise their patients accordingly.

It caused an outcry from fans of homeopathy who claimed that one cannot insist that homeopathic remedies are ineffective because they contain no active ingredient, while also arguing that they cause severe adverse effects. In a way, they were correct: homeopathic remedies are useless even at causing adverse effects. But this applies only to remedies that are manufactured correctly and that are highly dilute. The trouble is that quality control in homeopathy often seems to be less than adequate. And this is how adverse effects can happen!

The new article from India is an important addition to the literature providing more valuable information about the risks of homeopathy. Its authors were able to do chemical analyses of some of the remedies and could thus show what the reasons for the liver injuries were. The article provides an essential caution for those who delude themselves by assuming that homeopathy is harmless. In fact, the remedies can cause severe problems. But, as we have discussed regularly on this blog, the far greater risk in homeopathy is not the remedy but the homeopath and his/her all too often incompetent advice to patients.

The concept of ultra-processed food (UPF) was initially developed and the term coined by the Brazilian nutrition researcher Carlos Monteiro, with his team at the Center for Epidemiological Research in Nutrition and Health (NUPENS) at the University of São Paulo, Brazil. They argue that “the issue is not food, nor nutrients, so much as processing,” and “from the point of view of human health, at present, the most salient division of food and drinks is in terms of their type, degree, and purpose of processing.”

Examples of UPF include:

- Carbonated soft drinks,

- Sweet, fatty or salty packaged snacks,

- Candies (confectionery),

- Mass-produced packaged breads and buns,

- Cookies (biscuits),

- Pastries,

- Cakes and cake mixes,

- Margarine and other spreads,

- Sweetened breakfast cereals,

- Sweetened fruit yoghurt and energy drinks,

- Powdered and packaged instant soups, noodles, and desserts,

- Pre-prepared meat, cheese, pasta and pizza dishes,

- Poultry and fish nuggets and sticks,

- Sausages, burgers, hot dogs, and other reconstituted meat products.

Ultra-processed food is bad for our health! This message is clear and has been voiced so many times – not least by proponents of so-called alternative medicine (SCAM) – that most people should now understand it.

But how bad?

And what diseases does UPF promote?

How strong is the evidence?

I did a quick Medline search and was overwhelmed by the amount of research on this subject. In 2022 alone, there were more than 2000 publications! Here are the conclusions from just a few recent studies on the subject:

- Higher intake of UPFs was associated with higher incidence of Crohn’s disease, but not ulcerative colitis. In individuals with a pre-existing diagnosis of inflammatory bowel disease, consumption of UPFs was significantly higher compared to controls, and was associated with an increased need for IBD-related surgery. Further studies are needed to address the impact of UPF intake on disease pathogenesis, and outcomes.

- In this prospective cohort study, higher consumption of UPF was associated with higher risk of dementia, while substituting unprocessed or minimally processed foods for UPF was associated with lower risk of dementia.

- In almost all countries and age groups, increases in the dietary share of ultraprocessed foods were associated with increases in energy density and free sugars and decreases in fiber, suggesting that ultraprocessed food consumption is a potential determinant of obesity in children and adolescents.

- Higher ultraprocessed foods consumption was independently associated with a higher risk of incident chronic kidney disease in a general population.

- These data suggest that a consistent intake of ultra-processed foods over time is needed to impact nutritional status and body composition of children and adolescents.

- This meta-analysis suggests that high consumption of UPF, sugar-sweetened beverages, artificially sweetened beverages, processed meat, and processed red meat might increase all-cause mortality, while breakfast cereals might decrease it.

- The consumption of ultraprocessed foods represents a significant cause of premature death in Brazil.

- Available evidence suggests that UPFs may increase cancer risk via their obesogenic properties as well as through exposure to potentially carcinogenic compounds such as certain food additives and neoformed processing contaminants.

- The high consumption of UPF, almost more than 10% of the diet proportion, could increase the risk of developing type 2 diabetes in adult individuals.

Don’t get me wrong: this is not a systematic review of the subject. I am merely trying to give a rough impression of the research that is emerging. A few thoughts seem nonetheless appropriate.

- The research on this subject is intense.

- Even though most studies disclose associations and not causal links, there is in my view no question that UPF aggravates many diseases.

- The findings of the current research are highly consistent and point to harm done to most organs.

- Even though this is a subject on which advocates of SCAM are exceedingly keen, none of the research I saw was conducted by SCAM researchers.

- The view of many SCAM proponents that conventional medicine does not care about nutrition is clearly not correct.

- Considering how unhealthy UPF is, there seems to be a lack of effective education and action aimed at preventing the harm UPF does to us.