methodology

Musculoskeletal disorders (MSDs) are highly prevalent, burdensome, and putatively associated with an altered human resting muscle tone (HRMT). Osteopathic manipulative treatment (OMT) is commonly and effectively applied to treat MSDs and reputedly influences the HRMT. Arguably, OMT may modulate alterations in HRMT underlying MSDs. However, there is sparse evidence even for the effect of OMT on HRMT in healthy subjects.

A 3 × 3 factorial randomized trial was performed to investigate the effect of myofascial release (MRT), muscle energy (MET), and soft tissue techniques (STT) on the HRMT of the corrugator supercilii (CS), superficial masseter (SM), and upper trapezius muscles (UT) in healthy subjects in Hamburg, Germany. Participants were randomised into three groups (1:1:1 allocation ratio) receiving treatment, according to different muscle-technique pairings, over the course of three sessions with one-week washout periods. We assessed the effect of osteopathic techniques on muscle tone (F), biomechanical (S, D), and viscoelastic properties (R, C) from baseline to follow-up (primary objective) and tested if specific muscle-technique pairs modulate the effect pre- to post-intervention (secondary objective) using the MyotonPRO (at rest). Ancillary, we investigate if these putative effects may differ between the sexes. Data were analysed using descriptive (mean, standard deviation, and quantiles) and inductive statistics (Bayesian ANOVA). 59 healthy participants were randomised into three groups and two subjects dropped out from one group (n = 20; n = 20; n = 19–2). The CS produced frequent measurement errors and was excluded from analysis. OMT significantly changed F (−0.163 [0.060]; p = 0.008), S (−3.060 [1.563]; p = 0.048), R (0.594 [0.141]; p < 0.001), and C (0.038 [0.017]; p = 0.028) but not D (0.011 [0.017]; p = 0.527). The effect was not significantly modulated by muscle-technique pairings (p > 0.05). Subgroup analysis revealed a significant sex-specific difference for F from baseline to follow-up. No adverse events were reported.

The authors concluded that OMT modified the HRMT in healthy subjects which may inform future research on MSDs. In detail, MRT, MET, and STT reduced the muscle tone (F), decreased biomechanical (S not D), and increased viscoelastic properties (R and C) of the SM and UT (CS was not measurable). However, the effect on HRMT was not modulated by muscle–technique interaction and showed sex-specific differences only for F.

I think that this study merits a few comments:

- It seems unsurprising that manual manipulation can relax muscles.

- The blinding of the volunteers was compromised because the participants were osteopathy students able to distinguish between the different interventions.

- The mechanisms underlying these reported changes in HRMT following OMT are unclear.

- The effects do not seem to be treatment-specific.

- The treatments used are not typical for osteopathy.

- The manual techniques are only loosely defined and poorly standardized.

- The duration of the effect is unknown but probably short.

- The size of the effect is small.

- The clinical relevance of the effect is doubtful.
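As a rough plausibility check of the figures quoted above, one can divide each reported estimate by its bracketed value and convert the ratio into a two-sided p-value. This is only a sketch: it assumes (the abstract does not say so) that the bracketed numbers are standard errors, and it uses a normal approximation rather than the authors' actual model. The function name `wald_p` is made up for illustration.

```python
from statistics import NormalDist

# Estimates and bracketed values as quoted in the abstract.
# ASSUMPTION: the bracketed value is a standard error.
reported = {
    "F": (-0.163, 0.060),
    "S": (-3.060, 1.563),
    "R": (0.594, 0.141),
    "C": (0.038, 0.017),
    "D": (0.011, 0.017),
}


def wald_p(estimate, std_error):
    """Two-sided p-value from a normal approximation to estimate / SE."""
    z = estimate / std_error
    return 2 * (1 - NormalDist().cdf(abs(z)))


for name, (est, se) in reported.items():
    print(f"{name}: z = {est / se:+.2f}, approx. two-sided p = {wald_p(est, se):.3f}")
```

Under these assumptions the approximate p-values land in the same range as the published ones, which says nothing about whether changes of this size matter clinically.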

The present study was conducted to evaluate the effect of date palm on the sexual function of infertile couples. It was designed as a double-blind, placebo-controlled clinical trial conducted on infertile women and their husbands who were referred to infertility clinics in Iran in 2019.

The intervention group was given a palm date capsule and the control group was given a placebo. Data were collected through female sexual function index and International Index of Erectile Function.

The total score of sexual function of females in the intervention group increased significantly from 21.06 ± 2.58 to 27.31 ± 2.59 (P < 0.0001). Also, other areas of sexual function in females (arousal, orgasm, lubrication, pain during intercourse, satisfaction) in the intervention group showed a statistically significant increase compared to females in the control group (P < 0.0001).

All areas of male sexual function (erectile function, orgasmic function, sexual desire, intercourse satisfaction and overall satisfaction) significantly increased in the intervention group compared to the control group (P < 0.0001).

The authors concluded that the present study revealed that 1-month consumption of date palm has a positive impact on the sexual function of infertile couples.

In an attempt to explain the rationale for this study, the authors state that, since ancient times, date palm has been used in Greece, China and Egypt to treat infertility and increase sexual desire and fertility in females. Rasekh indicated that Palm Pollen is effective in sperm parameters of infertile men. Administering date palm to male rats and measuring the sexual parameters of rats showed an improvement in their sexual function. Studies on animals have shown its effect on the parameters of semen analysis in male animals and increasing hormones.

So, the trial was what one might call a ‘long shot’, even a very long one. But that does not render its findings less interesting. If the results could be confirmed, they would certainly have considerable significance.

But can they be confirmed?

I have some doubts.

Two things are remarkable, in my view.

- The study only had subjective endpoints.

- There was as good as no placebo effect in the control group.

How can this be?

One explanation might be that the verum and the placebo capsules were easily identified by their taste or other features. This would then lead to many patients being ‘deblinded’; in other words, the patients on verum would have known and expected to experience an effect, while the patients on placebo would have also known and been disappointed, thus not even experiencing a placebo response.

This might be an apt reminder for trialists to include a check of the success of blinding in their list of outcome measures.
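One common way of doing such a check is to ask participants at the end of the trial which treatment they think they received and to summarize the answers per arm with Bang's blinding index. The sketch below is a minimal illustration, not anything the date palm trial reported: the guess counts are entirely hypothetical, and the function name `bang_index` is made up.

```python
def bang_index(correct, incorrect, dont_know):
    """Bang's blinding index for one trial arm.

    +1 means everyone guessed their allocation correctly (complete unblinding),
     0 is what random guessing would give,
    -1 means everyone guessed the opposite allocation.
    """
    total = correct + incorrect + dont_know
    if total == 0:
        raise ValueError("no responses in this arm")
    return (correct - incorrect) / total


# HYPOTHETICAL end-of-trial guesses, for illustration only.
arms = {
    "verum": {"correct": 40, "incorrect": 5, "dont_know": 5},
    "placebo": {"correct": 35, "incorrect": 8, "dont_know": 7},
}

for arm, guesses in arms.items():
    print(f"{arm}: blinding index = {bang_index(**guesses):.2f}")
```

Values well above zero in both arms, as in this invented example, would be the pattern one expects if the capsules were easy to tell apart.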

Bioenergy (or energy healing) therapies are among the popular alternative treatment options for many diseases, including cancer. Many studies deal with the advantages and disadvantages of bioenergy therapies as an addition to established treatments such as chemotherapy, surgery, and radiation in the treatment of cancer. However, a systematic overview of this evidence is thus far lacking. For this reason, German authors reviewed and critically examined the evidence to determine what benefits the treatments have for patients.

In June 2022, a systematic search was conducted searching five electronic databases (Embase, Cochrane, PsychInfo, CINAHL and Medline) to find studies concerning the use, effectiveness, and potential harm of bioenergy therapies including the following modalities:

- Reiki,

- Therapeutic Touch,

- Healing Touch,

- Polarity Therapy.

From all 2477 search results, 21 publications with a total of 1375 patients were included in this systematic review. The patients treated with bioenergy therapies were mainly diagnosed with breast cancer. The main outcomes measured were:

- anxiety,

- depression,

- mood,

- fatigue,

- quality of life (QoL),

- comfort,

- well-being,

- neurotoxicity,

- pain,

- nausea.

The studies were predominantly of moderate quality and, for the most part, found no effect. In terms of QoL, pain, and nausea, there were some positive short-term effects of the interventions, but no long-term differences were detectable. The risk of side effects from bioenergy therapies appears to be relatively small.

The authors concluded that considering the methodical limitations of the included studies, studies with high study quality could not find any difference between bioenergy therapies and active (placebo, massage, RRT, yoga, meditation, relaxation training, companionship, friendly visit) and passive control groups (usual care, resting, education). Only studies with a low study quality were able to show significant effects.

Energy healing is as popular as it is implausible. What these ‘healers’ call ‘energy’ has nothing to do with energy as defined in physics. It is an undefined entity that exists only in the imagination of its proponents. So why should it have an effect on cancer or any other condition?

My team conducted 2 RCTs of energy healing (pain and warts); both failed to show positive effects. And here is what I stated in my recent book about energy healing for any ailment:

Energy healing is an umbrella term for a range of paranormal healing practices. Their common denominator is the belief in a mystical ‘energy’ that can be used for therapeutic purposes.

- Forms of energy healing have existed in many ancient cultures. The ‘New Age’ movement has brought about a revival of these ideas, and today energy healing systems are amongst the most popular alternative therapies in the US as well as in many other countries. Popular forms of energy healing include those listed above. Each of these is discussed and referenced in separate chapters of this book.

- Energy healing relies on the esoteric belief in some form of ‘energy’ which is distinct from the concept of energy understood in physics and refers to some life force such as chi in Traditional Chinese Medicine, or prana in Ayurvedic medicine.

- Some proponents employ terminology from quantum physics and other ‘cutting-edge’ science to give their treatments a scientific flair which, upon closer scrutiny, turns out to be but a veneer of pseudo-science.

- The ‘energy’ that energy healers refer to is not measurable and lacks biological plausibility.

- Considering its implausibility, energy healing has attracted a surprisingly high level of research activity. Its findings are discussed in the respective chapters of each of the specific forms of energy healing.

- Generally speaking, the methodologically best trials of energy healing fail to demonstrate that it generates effects beyond placebo.

- Even though energy healing is per se harmless, it can do untold damage, not least because it significantly undermines rational thought in our societies.

As you can see, I do not entirely agree with my German friends on the issue of harm. I think energy healing is potentially dangerous and should be discouraged.

Have you ever wondered how good or bad the education of chiropractors and osteopaths is? Well, I have – and this new paper promises to provide an answer.

The aim of this study was to explore Australian chiropractic and osteopathic new graduates’ readiness for transition to practice concerning their clinical skills, professional behaviors, and interprofessional abilities. Phase 1 explored final-year students’ self-perceptions, and this part uncovered their opinions after 6 months or more in practice.

Interviews were conducted with a self-selecting sample of phase 1 participant graduates from 2 Australian chiropractic and 2 osteopathic programs. Results of the thematic content analysis of responses were compared to the Australian Chiropractic Standards and Osteopathic Capabilities, the authority documents at the time of the study.

Interviews from graduates of 2 chiropractic courses (n = 6) and 2 osteopathic courses (n = 8) revealed that the majority had positive comments about their readiness for practice. Most were satisfied with their level of clinical skills, verbal communication skills, and manual therapy skills. Gaps in competence were identified in written communications such as case notes and referrals to enable interprofessional practice, understanding of professional behaviors, and business skills. These identified gaps suggest that these graduates are not fully cognizant of what it means to manage their business practices in a manner expected of a health professional.

The authors concluded that this small study into clinical training for chiropractic and osteopathy suggests that graduates lack some necessary skills and that it is possible that the ideals and goals for clinical education, to prepare for the transition to practice, may not be fully realized or deliver all the desired prerequisites for graduate practice.

Their conclusions in the actual paper finish with these sentences: In the main, graduate participants and the final year students were unable to articulate what professional behaviors were expected of them. The identified gaps suggest these graduates are not fully cognizant of what it means to manage their business practices in a manner expected of a health professional.

In several ways, this is a remarkable paper – remarkably poor, I hasten to add. Apart from the fact that its sample size was tiny and the response rate was low, it has many further limitations. Most notably, the clinical skills, professional behaviors, and interprofessional abilities were not assessed. All the researchers did was ask the participants how good or bad they were at these skills. Is this method going to generate reliable evidence? I very much doubt it!

Imagine, these guys have just paid tidy sums for their ‘education’ and they have no experience to speak of. Are they going to be in a good position to critically evaluate their abilities? No, I fear not!

Considering these flaws and the fact that chiropractors and osteopaths are not exactly known for their skills of critical thinking, I find it amazing that important deficits in their abilities nevertheless emerge. If I had to formulate a conclusion from all this, I might therefore suggest this:

A dismal study seems to suggest that chiropractic and osteopathic schooling is dismal.

PS

Come to think of it, there might be another fitting option:

Yet another team of chiros and osteos demonstrates that they don’t know how to do science.

This randomized clinical trial (RCT) tested whether acupuncture is effective for the prevention of chronic tension-type headaches (CTTH). The researchers recruited 218 participants who were diagnosed with CTTH.

- The participants in the intervention group received 20 sessions of true acupuncture (TA group) over 8 weeks. The acupuncture treatments were standardized across participants, and each acupuncture site was needled to achieve deqi sensation. Each treatment session lasted 30 minutes.

- The participants in the control group received the same sessions and treatment frequency of superficial acupuncture (SA group)—defined as a type of sham control by avoiding deqi sensation at each acupuncture site.

The main outcome measure was the responder rate at 16 weeks after randomization. Follow-up was 32 weeks. A responder was defined as a participant who reported at least a 50% reduction in the monthly number of headache days (MHDs).

The responder rate was 68.2% in the TA group (n=110) versus 48.1% in the SA group (n=108) at week 16 (odds ratio, 2.65; 95%CI, 1.5 to 4.77; p<0.001); and 68.2% in the TA group versus 50% in the SA group at week 32 (odds ratio, 2.4; 95%CI, 1.36 to 4.3; p<0.001). The reduction in MHDs was 13.1±9.8 days in the TA group versus 8.8±9.6 days in the SA group at week 16 (mean difference, 4.3 days; 95%CI, 2.0 to 6.5; p<0.001), and the reduction was 14±10.5 days in the TA group versus 9.5±9.3 days in the SA group at week 32 (mean difference, 4.5 days; 95%CI, 2.1 to 6.8; p<0.001). Four mild adverse events were reported; three in the TA group versus one in the SA group.
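For orientation, the week-16 responder rates can be turned into a crude odds ratio, an absolute risk difference, and a number needed to treat. The sketch below rests on one assumption: the responder counts (75 of 110 and 52 of 108) are reconstructed by rounding the published percentages and are not taken from the paper. The crude odds ratio it produces is lower than the reported 2.65, which may come from an adjusted model that this sketch does not try to reproduce. The function name `two_by_two_summary` is made up.

```python
def two_by_two_summary(resp_a, n_a, resp_b, n_b):
    """Crude effect measures for responders in arm A versus arm B."""
    risk_a = resp_a / n_a
    risk_b = resp_b / n_b
    odds_a = resp_a / (n_a - resp_a)
    odds_b = resp_b / (n_b - resp_b)
    risk_difference = risk_a - risk_b
    return {
        "odds_ratio": odds_a / odds_b,
        "risk_difference": risk_difference,
        "nnt": 1 / risk_difference,
    }


# ASSUMPTION: counts reconstructed from 68.2% of 110 and 48.1% of 108.
week16 = two_by_two_summary(resp_a=75, n_a=110, resp_b=52, n_b=108)

for name, value in week16.items():
    print(f"{name}: {value:.2f}")
```

None of these figures say anything about why the groups differed; they only restate the size of the reported difference.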

The authors concluded that the 8-week TA treatment was effective for the prophylaxis of CTTH. Further studies might focus on the cost-effectiveness of the treatment.

“Our study showed that deqi sensation could enhance the effect of acupuncture in the treatment of chronic TTH, and the effect of acupuncture lasted at least 6 months when the treatment was stopped,” said co-investigator Ying Li, MD, PhD, The Third Hospital/Acupuncture and Tuina School, Chengdu University of Traditional Chinese Medicine, Chengdu, China.

Why am I not convinced?

Assuming that all the findings are correctly reported, the study does not at all show that the treatment was effective. It merely demonstrates that those patients who knew that they were receiving TA told the researcher that they improved more than those who knew they had sham acupuncture. The difference in outcomes is not in the least surprising: patients’ knowledge of having had the verum leads to a placebo effect and to social desirability (patients giving the researchers positive responses simply because they were thankful for being looked after). Patients’ knowledge of having had the sham treatment leads to disappointment and thus worse outcomes.

But this is not the only reason why I am skeptical about this study. The authors claim they achieved deqi at every treatment. That is 20 treatments in 110 patients or 2 200 deqis! I think someone might be telling porkies here. Deqi cannot reliably be elicited on every single occasion. I, therefore, feel that perhaps the authors of this trial were a bit more than generous when writing up their study, and I am reminded of the recent report claiming that more than 80% of clinical trial data from China are fabricated.

Sixty thousand people are diagnosed with Parkinson’s disease (PD) each year, making it the second most common neurodegenerative disorder. PD results in a variety of gait disturbances, including muscular rigidity and decreased range of motion (ROM), that increase the fall risk of those afflicted. Osteopathic manipulative treatment (OMT) might address the somatic dysfunction associated with neurodegeneration in PD. Moreover, osteopathic cranial manipulative medicine (OCMM) might improve gait performance by improving circulation to the affected nervous tissue. Are these ideas realistic hypotheses or merely wishful thinking?

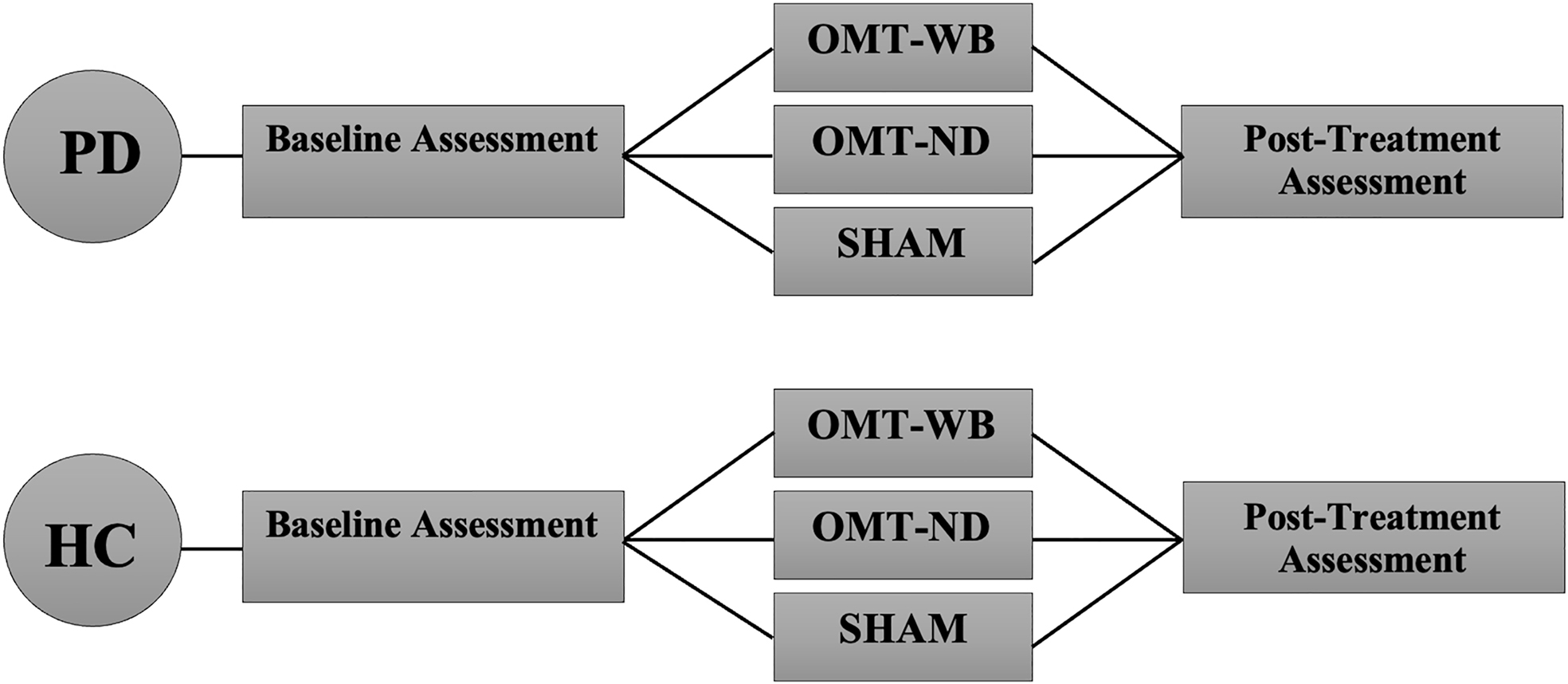

This study aimed to determine whether a single session of OMT or OMT + OCMM can improve the gait of individuals with PD by addressing joint restrictions in the sagittal plane and by increasing ROM in the lower limb. It was designed as a two-group, randomized controlled trial in which individuals with PD (n=45) and age-matched healthy control participants (n=45) were recruited from the community. PD participants were included if they were otherwise healthy, able to stand and walk independently, had not received OMT or physical therapy (PT) within 30 days of data collection, and had idiopathic PD in Hoehn and Yahr stages 1.0-3.0.

PD participants were randomly assigned to one of three experimental treatment protocols:

- a ‘whole-body’ OMT protocol (OMT-WB), which included OMT and OCMM techniques;

- a ‘neck-down’ OMT protocol (OMT-ND), including only OMT techniques;

- and a sham treatment protocol.

Control participants were age-matched to a PD participant and were provided the same OMT experimental protocol.

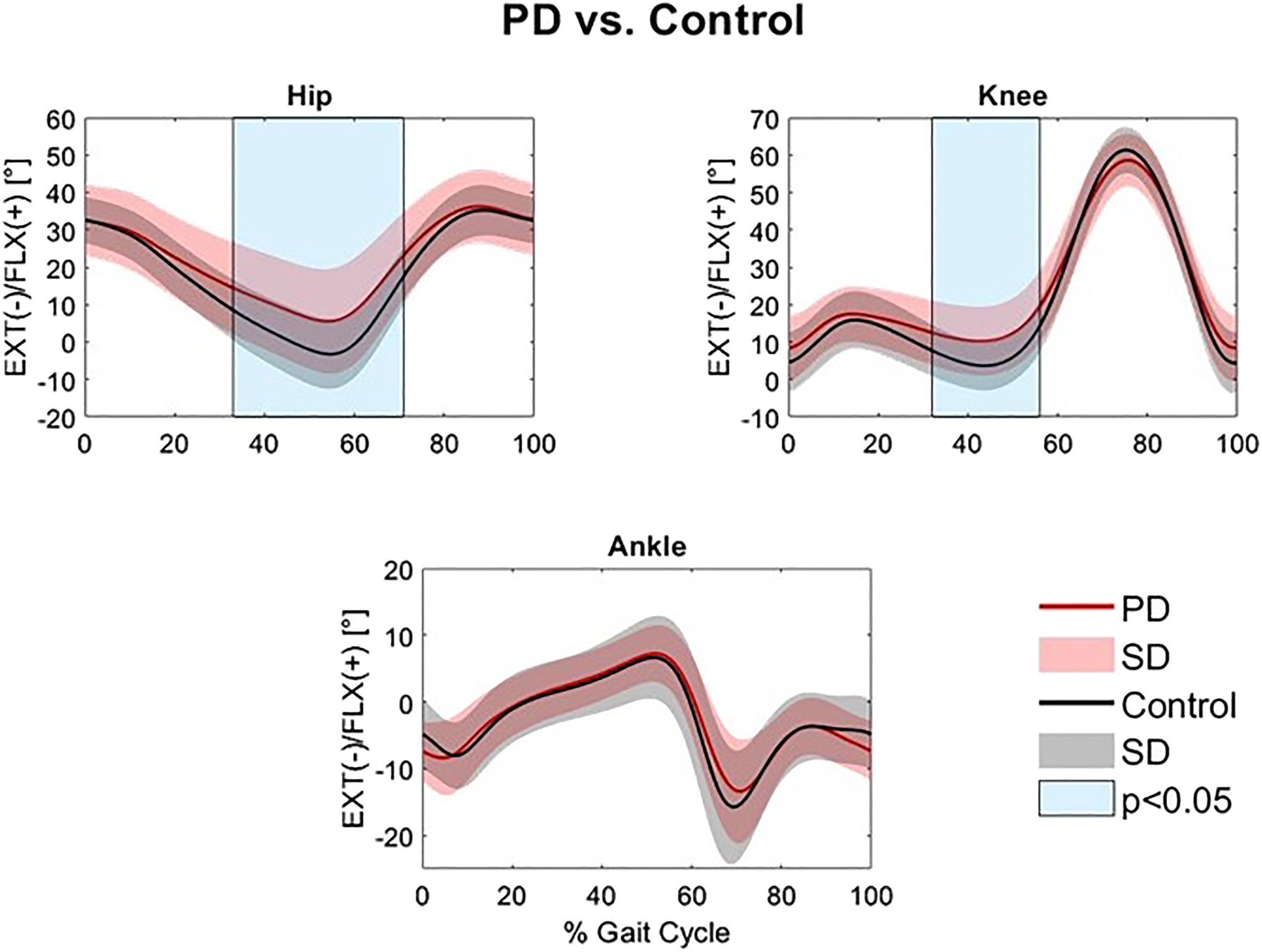

An 18-camera motion analysis system was utilized to capture 3-dimensional (3D) position data in a treadmill walking trial before and after the assigned treatment protocol. Pretreatment and posttreatment hip, knee, and ankle ROM were compared with paired t-tests, and joint angle waveforms during the gait cycle were analyzed with statistical parametric mapping (SPM), which is a type of waveform analysis.

Individuals with PD had significantly reduced hip and knee extension in the stance phase compared to controls (32.9-71.2% and 32.4-56.0% of the gait cycle, respectively). Individuals with PD experienced a significant increase in total sagittal hip ROM (p=0.038) following a single session of the standardized OMT-WB treatment protocol. However, waveform analysis found no significant differences in sagittal hip, knee, or ankle angles at individual points of the gait cycle following OMT-WB, OMT-ND, or sham treatment protocols.

The authors concluded that the increase in hip ROM observed following a single session of OMT-WB suggests that OCMM in conjunction with OMT may be useful for improving gait kinematics in individuals with PD. Longitudinal studies over multiple visits are needed to determine the long-term effect of regular OMT and OMT+OCMM treatments on Parkinsonian gait characteristics.

The study has many significant limitations. For instance, the hypotheses tested lack plausibility and the outcome measures are of doubtful validity. Most importantly, the observed effects are only short term and their clinical relevance is highly questionable.

Earlier this year, I started the ‘WORST PAPER OF 2022 COMPETITION’. As a competition without a prize is no fun, I am offering the winner (that is the lead author of the winning paper) one of my books that best fits his/her subject. I am sure this will overjoy him or her.

And how do we identify the winner? I will continue blogging about nominated papers (I hope to identify about 10 in total), and towards the end of the year, I will let my readers decide democratically.

In this spirit of democratic voting, let me suggest to you ENTRY No 8 (it is so impressive that I must show you the unadulterated abstract):

Introduction

Female sexual dysfunction (FSD) seriously affects the quality of life of women. However, most women do not have access to effective treatment.

Aim

This study aimed to determine the feasibility and effectiveness of the use of acupuncture in FSD treatment based on existing clear acupuncture protocol and experience-supported face-to-face therapy.

Methods

A retrospective analysis was performed on 24 patients with FSD who received acupuncture from October 2018 to February 2022. The Chinese version of the female sexual function index, subjective sensation, sexual desire, sexual arousal, vaginal lubrication, orgasm, sexual satisfaction, and dyspareunia scores were compared before and after the treatment in all 24 patients.

Main Outcome Measure

A specific female sexual function index questionnaire was used to assess changes in female sexual function before and after the acupuncture treatment.

Results

In this study, the overall treatment improvement rate of FSD was 100%. The Chinese version of the female sexual function index total score, sexual desire score, sexual arousal score, vaginal lubrication score, orgasm score, sexual satisfaction score, and dyspareunia score during intercourse were significantly different before and after the treatment (P < .05). Consequently, participants reported high levels of satisfaction with acupuncture. This study indicates that acupuncture could be a new and effective technique for treating FSD. The main advantages of this study are its design and efficacy in treating FSD. To the best of our knowledge, this is the first study to evaluate the efficacy of acupuncture in the treatment of FSD using the female sexual function index scale from 6 dimensions. The second advantage is that the method used (ie, the nonpharmacological method) is simple, readily available, highly safe with few side effects, and relatively inexpensive with high patient satisfaction. However, limitations include small sample size and lack of further detailed grouping, pre and post control study of patients, blank control group, and pre and post control study of sex hormones.

Conclusion

Acupuncture can effectively treat FSD from all dimensions with high safety, good satisfaction, and definite curative effect, and thus, it is worthy of promotion and application.

My conclusion is very different: acupuncture can effectively kill any ability for critical thinking.

I hardly need to list the flaws of this paper – they are all too obvious, e.g.:

- there is no control group; the results might therefore be due to a host of factors that are unrelated to acupuncture,

- the trial was too small to allow far-reaching conclusions,

- the study does not tell us anything about the safety of acupuncture.

The authors call their investigation a ‘pilot study’. Does that excuse the flimsiness of their effort? No! A pilot study cannot draw conclusions such as the above.

What’s the harm? you might ask; nobody will ever read such rubbish and nobody will have the bizarre idea to use acupuncture for treating FSD. I’m afraid you would be wrong to argue in this way. The paper already got picked up by THE DAILY MAIL in an article entitled “Flailing libido? Acupuncture could help boost sex drive, scientists say” which was as devoid of critical thinking as the original study. Thus we can expect that hundreds of desperate women are already getting needled and ripped off as we speak. And in any case, offensively poor science is always harmful; it undermines public trust in research (and it renders acupuncture research the laughing stock of serious scientists).

This study aimed to evaluate the efficacy of Persian barley water in controlling the clinical outcomes of hospitalized COVID-19 patients. It was designed as a single-blind, add-on therapy, randomized controlled clinical trial and conducted in Shiraz, Iran, from January to March 2021. One hundred hospitalized COVID-19 patients with moderate disease severity were randomly allocated to receive routine treatment (per local protocols) with or without 250 ml of Persian barley water (PBW) daily for two weeks. Clinical outcomes and blood tests were recorded before and after the study period. Multivariable modeling was applied using Stata software for data analysis.

The length of hospital stay (LHS) was 4.5 days shorter in the intervention group than the control group regardless of history of cigarette smoking (95% confidence interval: -7.22, -1.79 days). Also, body temperature, erythrocyte sedimentation rate (ESR), C-reactive protein (CRP), and creatinine significantly dropped in the intervention group compared to the control group. No adverse events related to PBW occurred.

The authors from the Department of Traditional Medicine, Shiraz University of Medical Sciences, Shiraz, Iran, concluded that this clinical trial demonstrated the efficacy of PBW in minimizing the LHS, fever, and levels of ESR, CRP, and creatinine among hospitalized COVID-19 patients with moderate disease severity. More robust trials can help find safe and effective herbal formulations as treatments for COVID-19.

I must admit, I did not know about PBW. The authors explain that PBW is manufactured from Hordeum vulgare via a specific procedure. According to recent studies, barley is rich in constituents such as selenium, tocotrienols, phytic acid, catechin, lutein, vitamin E, and vitamin C; these compounds are responsible for their antioxidant and anti-inflammatory properties. Barley grains also have immune-stimulating effects, antioxidant properties, protective effects on the liver and digestive systems, anti-cancer effects, and act to reduce uric acid levels.

But even if these effects would constitute a plausible mechanism for explaining the observed effects (which I do not think they do), the study itself is more than flimsy.

I do not understand why researchers investigating an important issue do not make sure that their study is as rigorous as possible.

- Why not use an adequately large sample size?

- Why not employ a placebo?

- Why not double-blind?

- Why not report the most important outcome, i.e. mortality?

As it stands, nobody will take this study seriously. Perhaps this is a good thing – but perhaps PBW does have positive effects (I know it’s a long shot) and, in this case, a poor-quality study would only prevent an effective therapy from coming to light.

According to the authors of this study, research is lacking regarding osteopathic approaches in treating polycystic ovary syndrome (PCOS), one of the prevailing endocrine abnormalities in reproductive-aged women. Limited movement of pelvic organs can result in functional and structural deficits, which can be resolved by applying visceral manipulation (VM). Already with these two introductory sentences, I have problems. But for the moment, we can leave this aside and have a look at their trial.

The study was aimed at analyzing the effect of VM on dysmenorrhea, irregular, delayed, and/or absent menses, and premenstrual symptoms in PCOS patients.

Thirty Egyptian women with PCOS, with menstruation-related complaints and free from systematic diseases and/or adrenal gland abnormalities, prospectively participated in a single-blinded, randomized controlled trial. They were recruited from the women’s health outpatient clinic in the faculty of physical therapy at Cairo University, with an age of 20-34 years, and a body mass index (BMI) ≥25, <30 kg/m2. Patients were randomly allocated into two equal groups (15 patients); the control group received a low-calorie diet for 3 months, and the study group received the same hypocaloric diet plus VM to the pelvic organs and their related structures, according to assessment findings, for eight sessions over 3 months. Evaluations for body weight, BMI, and menstrual problems were done by weight-height scale, and menstruation-domain of Polycystic Ovary Syndrome Health-Related Quality of Life Questionnaire (PCOSQ), respectively, at baseline and after 3 months of treatments.

A total of 30 patients were included, with baseline mean age, weight, BMI, and menstruation domain score of 27.5 ± 2.2 years, 77.7 ± 4.3 kg, 28.6 ± 0.7 kg/m2, and 3.4 ± 1.0, respectively, for the control group, and 26.2 ± 4.7 years, 74.6 ± 3.5 kg, 28.2 ± 1.1 kg/m2, and 2.9 ± 1.0, respectively, for the study group. Of the 15 patients in the study group, uterine adhesions were found in 14 patients (93.3%), followed by restricted uterine mobility in 13 patients (86.7%), restricted ovarian/broad ligament mobility (9, 60%), and restricted motility (6, 40%). At baseline, there was no significant difference (p>0.05) in any of the demographics (age, height), or dependent variables (weight, BMI, menstruation domain score) among both groups. Post-study, there was a statistically significant reduction (p=0.000) in weight, and BMI mean values for the diet group (71.2 ± 4.2 kg, and 26.4 ± 0.8 kg/m2, respectively) and the diet + VM group (69.2 ± 3.7 kg; 26.1 ± 0.9 kg/m2, respectively). For the improvement in the menstrual complaints, a significant increase (p<0.05) in the menstruation domain mean score was shown in the diet group (3.9 ± 1.0), and the diet + VM group (4.6 ± 0.5). On comparing both groups post-study, there was a statistically significant improvement (p=0.024) in the severity of menstruation-related problems in favor of the diet + VM group.

The authors concluded that VM yielded greater improvement in menstrual pain, irregularities, and premenstrual symptoms in PCOS patients when added to caloric restriction than utilizing the low-calorie diet alone in treating that condition.

VM involves the manual manipulation by a therapist of internal organs, blood vessels and nerves (the viscera) mostly from outside the body, but sometimes, the therapist also puts his/her fingers into the patient’s vagina. It was developed by the osteopath Jean-Pierre Barral. He stated that through his clinical work with thousands of patients, he created this modality based on organ-specific fascial mobilization. And through work in a dissection lab, he was able to experiment with visceral manipulation techniques and see the internal effects of the manipulations. According to its proponents, visceral manipulation is based on the specific placement of soft manual forces looking to encourage the normal mobility, tone, and motion of the viscera and their connective tissues. The idea is that these gentle manipulations may potentially improve the functioning of individual organs, the systems the organs function within, and the structural integrity of the entire body.

I don’t see any reason to believe the concepts of VM are plausible. Thus I find the hypothesis of this trial extremely far-fetched. The results are equally unconvincing. As we have often discussed, the ‘A+B vs B’ design cannot prove a causal relationship between the intervention and the outcome.

The most likely explanation for the findings is that the patients receiving VM experienced or merely reported improvements because the extra attention of mildly invasive treatments produced a powerful placebo effect. To put it bluntly: this is a poor, arguably unethical study where over-enthusiastic researchers reach a conclusion that is not supported by the data.
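The weakness of the ‘A+B vs B’ design can be illustrated with a toy simulation in which the added treatment A has no specific effect at all and contributes only a nonspecific boost (attention, expectation). Everything here is an assumption chosen for illustration: the outcome scale, the size of the boost, the arm size, and the function name `simulate_a_plus_b_vs_b`. None of it is taken from the PCOS trial.

```python
import random
from statistics import mean


def simulate_a_plus_b_vs_b(n_per_arm=10_000, nonspecific_boost=0.7, seed=1):
    """Mean outcome difference (A+B minus B) when A has NO specific effect."""
    rng = random.Random(seed)
    # Both arms receive B, so both draw from the same improvement distribution.
    b_only = [rng.gauss(0.5, 1.0) for _ in range(n_per_arm)]
    # The A+B arm additionally gets the nonspecific boost and nothing else.
    a_plus_b = [rng.gauss(0.5, 1.0) + nonspecific_boost for _ in range(n_per_arm)]
    return mean(a_plus_b) - mean(b_only)


difference = simulate_a_plus_b_vs_b()
print(f"A+B minus B: {difference:.2f}")
```

In this sketch the combined arm comes out ahead by roughly the size of the nonspecific boost, even though A does nothing specific. A trial of this design cannot distinguish that scenario from a genuine treatment effect.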

Traditional, complementary, and alternative medicine (TCAM) – as most of my readers know, I prefer the abbreviation SCAM for so-called alternative medicine – refers to a broad range of health practices and products typically not part of the ‘conventional medicine’ system. Its use is substantial among the general population. TCAM products and therapies may be used in addition to, or instead of, conventional medicine approaches, and some have been associated with adverse reactions or other harms.

The aims of this systematic review were to identify and examine recently published national studies globally on the prevalence of TCAM use in the general population, to review the research methods used in these studies, and to propose best practices for future studies exploring the prevalence of use of TCAM.

MEDLINE, Embase, CINAHL, PsycINFO, and AMED were searched to identify relevant studies published since 2010. Reports describing the prevalence of TCAM use in a national study among the general population were included. The quality of included studies was assessed using a risk of bias tool developed by Hoy et al. Relevant data were extracted and summarised.

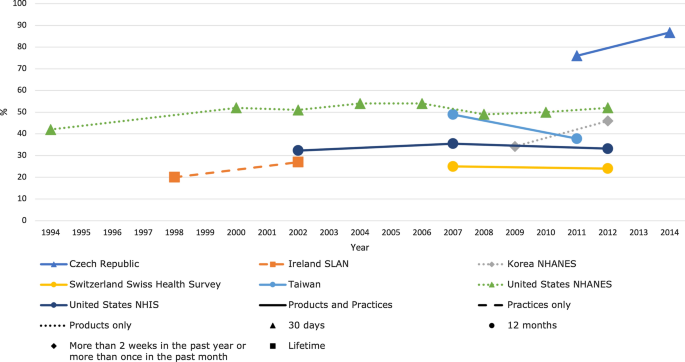

Forty studies from 14 countries, comprising 21 national surveys and one cross-national survey, were included. Studies explored the use of TCAM products (e.g. herbal medicines), TCAM practitioners/therapies, or both. Included studies used different TCAM definitions, prevalence time frames and data collection tools, methods and analyses, thereby limiting comparability across studies. The reported prevalence of use of TCAM (products and/or practitioners/therapies) over the previous 12 months was 24–71.3%.

The authors concluded that the reported prevalence of use of TCAM (products and/or practitioners/therapies) is high, but may underestimate use. Published prevalence data varied considerably, at least in part because studies utilise different data collection tools, methods and operational definitions, limiting cross-study comparisons and study reproducibility. For best practice, comprehensive, detailed data on TCAM exposures are needed, and studies should report an operational definition (including the context of TCAM use, products/practices/therapies included and excluded), publish survey questions and describe the data-coding criteria and analysis approach used.

[Trends in prevalence of TCAM use by country for countries with at least two data collection waves from a nationally representative study. For data collected over several years (e.g. 2007–2009), the prevalence data are plotted at the end of the data collection period (e.g. 2009). Solid and perforated lines between consecutive points are for illustrative purposes only and are not intended to represent linearity. NHANES National Health and Nutrition Examination Survey, NHIS National Health Interview Survey, SLAN Survey of Lifestyle, Attitudes and Nutrition.]

The review discloses that the prevalence reported across countries ranges from 24 to 71%. This huge variability is not very surprising; some of the many reasons for this phenomenon include:

- different TCAM definitions,

- different prevalence time frames,

- different data collection tools,

- different methods of analyzing the data.

Despite these problems, the information summarized in the review is fascinating in several respects. For me, the most interesting message here is this: the plethora of claims that SCAM use is increasing are not supported by sound evidence.