bias

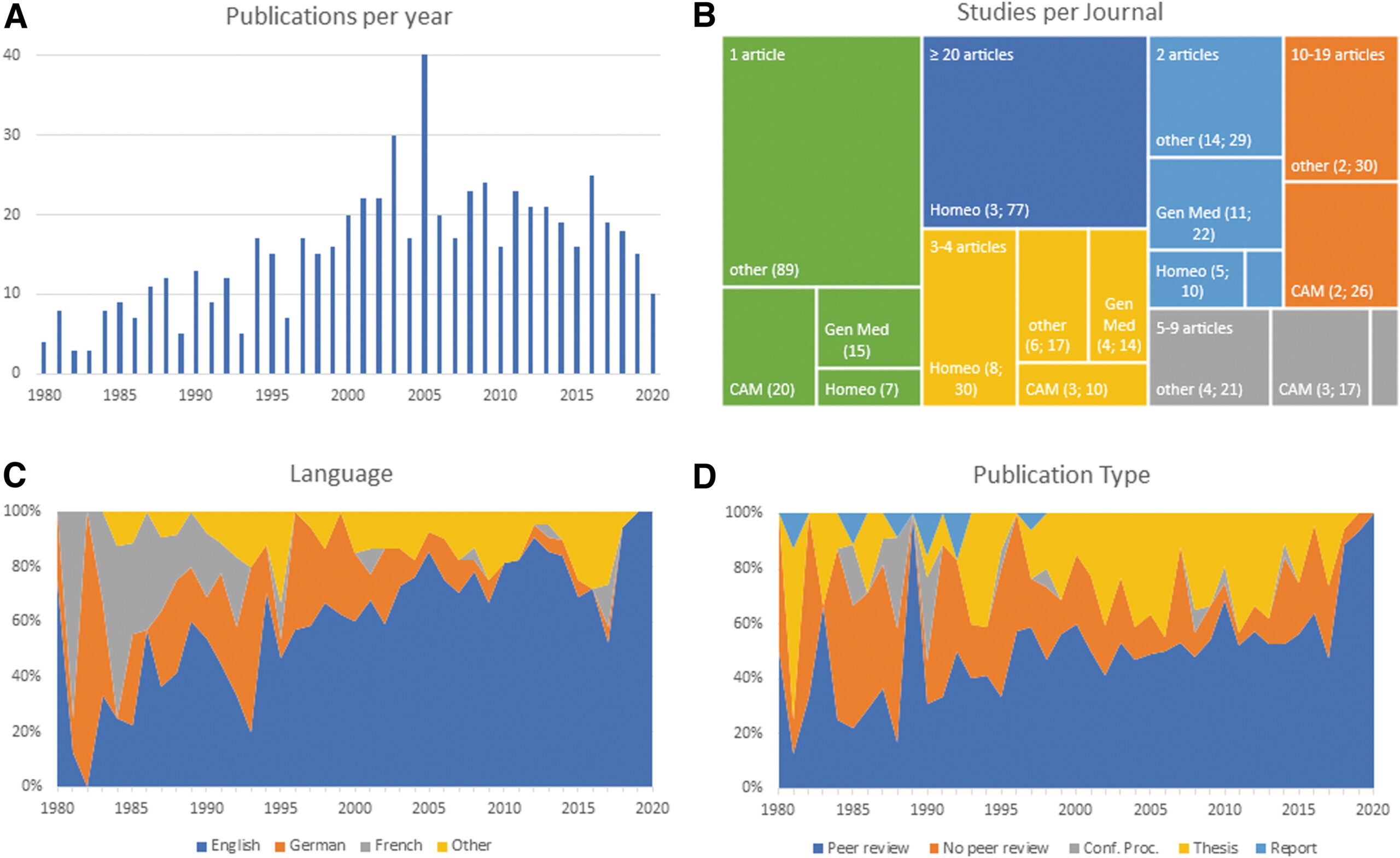

The authors of this article searched 37 online sources, as well as print libraries, for homeopathy (HOM) and related terms in eight languages (1980 to March 2021). They included studies that compared a homeopathic medicine or intervention with a control regarding the therapeutic or preventive outcome of a disease (classified according to International Classification of Diseases-10). Subsequently, the data were extracted independently by two reviewers and analyzed descriptively.

A total of 636 investigations met the inclusion criteria, of which 541 had a therapeutic and 95 a preventive purpose. Seventy-three percent were randomized controlled trials (n = 463), whereas the rest were non-randomized studies (n = 173). The most frequently employed comparator was placebo (n = 400).

The type of homeopathic intervention was classified as:

- multi-constituent or complex (n = 272),

- classical or individualized (n = 176),

- routine or clinical (n = 161),

- isopathic (n = 19),

- various (n = 8).

The potencies ranged from 1X (a dilution of 10⁻¹) to 10M (100⁻¹⁰⁰⁰⁰). The included studies explored the effect of HOM in 223 different medical indications. The authors also present the evidence in an online database.

The authors concluded that this bibliography maps the status quo of clinical research in HOM. The data will serve for future targeted reviews, which may focus on the most studied conditions and/or homeopathic medicines, clinical impact, and the risk of bias of the included studies.

There are still skeptics who claim that no evidence exists for homeopathy. This paper proves them wrong. The number of studies may seem sizable to homeopaths, but compared to most other so-called alternative medicines (SCAMs), it is low. And compared to any conventional field of healthcare, it is truly tiny.

There are also those who claim that no rigorous trials of homeopathy with positive results have ever emerged. This assumption is also erroneous. There are several such studies, but this paper was not aimed at identifying them. Obviously, the more important question is this: what does the totality of the methodologically sound evidence show? It fails to convincingly demonstrate that homeopathy has effects beyond placebo.

The present review was unquestionably a lot of tedious work, but it does not address these latter questions. It was published by known believers in homeopathy and sponsored by the Tiedemann Foundation for Classical Homeopathy, the Homeopathy Foundation of the Association of Homeopathic Doctors (DZVhÄ), both in Germany, and the Foundation of Homeopathy Pierre Schmidt and the Förderverein komplementärmedizinische Forschung, both in Switzerland.

The dataset established by this article will now almost certainly be used for numerous further analyses. I hope that this work will not be left to enthusiasts of homeopathy, who have often shown themselves to be blinded by their own biases and are thus no longer taken seriously outside the realm of homeopathy. It would be much more productive, I feel, if independent scientists could tackle this task.

The global interest in dieting has increased, and many people have become obsessed with certain fad diets, assuming they are magic bullets for their problems. A fad diet is a popular dietary pattern promoted as a quick fix. This review article explores the current evidence related to the health impacts of (amongst others) detox diets (DDs). DDs are interventional diets specifically designed for toxin elimination, health promotion, and weight management. They involve multiple approaches, including total calorie restriction, dietary modification, or juice fasts, and often the use of additional minerals, vitamins, diuretics, laxatives, or ‘cleansing foods’. Some of the many DDs used today are listed below:

| Diet type | Duration | Foods allowed | Proposed claims |
| --- | --- | --- | --- |
| Liver cleansing diet | 8 weeks | Plant-based, dairy-free, low-fat, high-fiber, unprocessed foods are allowed. Epsom salt and liver tonics are also consumed. | Improved energy levels and liver function; toxins removal; improved immune response; efficient metabolism of fats and better weight control |
| Lemon detox diet/Master cleanser | 10 days | A liquid-only diet based on purified water, lemon juice, tree syrup, and cayenne pepper. A mild laxative herbal tea and sea salt water are also incorporated. | Toxins removal; shiny hair, glowing skin, and strong nails; weight loss |
| The clean cleanse | 21 days | Breakfast and dinner comprise probiotic capsules, cleanse supplements, and cleanse shakes. A solid meal at lunch while avoiding gluten, dairy, corn, soy, pork, beef, refined sugars, some fruits, and vegetables. | Toxins removal; improved energy, digestion, sleep, and mental health; reduction in joint pains, headaches, constipation, and bloating |
| Martha’s Vineyard detox diet | 21 days | Herbal teas, vegetable soups and juices, specially formulated tablets, powders, and digestive enzymes are on the menu. | Weight loss; toxins removal; improved energy levels |
| Weekend wonder detox | 48 h | Protein-rich meals, salads, detox-promoting superfoods, and beverages. Healthy lifestyle, spa treatments, and herbal remedies. | Toxins removal; improved organ function; strengthened body; enhanced beauty |
| Fat flush | 2 weeks | Large meals are replaced with dilute cranberries, hot water with lemon, pre-prepared cocktails, supplements, and small meals. | Toxins removal; reduced stress; weight loss; improved liver function |
| Blueprint cleanse | 3 days | Consumption of six pre-prepared vegetable and fruit juices is allowed per day. | Toxins removal |
| The Hubbard purification rundown | Several weeks | Niacin doses along with sustained consumption of vitamins A, B, C, D, and E. Daily exercise with balanced meals. Restriction of alcohol and drugs. Sitting in a sauna for ≤ 5 h each day. | Toxins removal from fat stores; improved memory and intelligence quotient; better blood pressure and cholesterol levels |

Currently, there is no good clinical evidence for the effectiveness of DDs and some evidence to suggest they might do harm. Many DDs are liquid-based, low-calorie, and nutrient-poor. For example, a part of BluePrint Cleanse, Excavation Cleanse, provides only 19 g protein and 860 kcal/day, which is far below the actual requirement. The Food and Agriculture Organization (FAO) recommends a minimum of 0.83 g/kg body weight of high-quality protein and 1,680 kcal/day for an adult. DDs may also induce stress, raise cortisol levels, and increase appetite, resulting in binge eating and weight gain.
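To put the Excavation Cleanse figures into perspective, here is a small back-of-the-envelope calculation. It is only a sketch: the 70 kg body weight is my own assumption for illustration, and the function name is made up; all other numbers are the ones quoted above.

```python
# Figures quoted in the text above
FAO_PROTEIN_G_PER_KG = 0.83   # minimum high-quality protein, g per kg body weight
FAO_ENERGY_KCAL = 1680        # minimum energy intake, kcal/day
CLEANSE_PROTEIN_G = 19        # Excavation Cleanse, g protein/day
CLEANSE_ENERGY_KCAL = 860     # Excavation Cleanse, kcal/day


def shortfall(body_weight_kg):
    """Compare the cleanse with the FAO minimums for a given body weight."""
    required_protein = FAO_PROTEIN_G_PER_KG * body_weight_kg
    return {
        "required_protein_g": required_protein,
        "protein_fraction_met": CLEANSE_PROTEIN_G / required_protein,
        "energy_fraction_met": CLEANSE_ENERGY_KCAL / FAO_ENERGY_KCAL,
    }


# Hypothetical 70 kg adult (an assumption, not a figure from the review)
result = shortfall(70.0)
for key, value in result.items():
    print(f"{key}: {value:.2f}")
```

For this hypothetical 70 kg adult, the cleanse would supply roughly a third of the minimum protein and about half of the minimum energy.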

As no convincing positive evidence exists for DDs and detox products, their use needs to be discouraged by health professionals. Moreover, regulatory review and adequate safety monitoring should be considered.

In this paper, a team of US researchers mined opinions on homeopathy for COVID-19 expressed on Twitter. Their investigation was conducted with a dataset of nearly 60K tweets collected during a seven-month period ending in July 2020. The researchers first built text classifiers (linear and neural models) to mine opinions on homeopathy (positive, negative, neutral) from tweets using a dataset of 2400 hand-labeled tweets obtaining an average macro F-score of 81.5% for the positive and negative classes. The researchers applied this model to identify opinions from the full dataset.
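For readers unfamiliar with the metric, a macro F-score is simply the unweighted mean of the per-class F1 scores, here taken over the positive and negative classes only. The following is a minimal sketch of that calculation; the labels are invented toy data, and the function names are mine, not the researchers' code.

```python
def f1_for_class(y_true, y_pred, label):
    """F1 score (harmonic mean of precision and recall) for one class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == label and p == label)
    fp = sum(1 for t, p in pairs if t != label and p == label)
    fn = sum(1 for t, p in pairs if t == label and p != label)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def macro_f1(y_true, y_pred, labels):
    """Unweighted mean of the per-class F1 scores over the given labels."""
    return sum(f1_for_class(y_true, y_pred, label) for label in labels) / len(labels)


# Invented toy labels for illustration only, not data from the study
y_true = ["pos", "pos", "neg", "neg", "neu", "pos"]
y_pred = ["pos", "neg", "neg", "neg", "neu", "pos"]

score = macro_f1(y_true, y_pred, labels=["pos", "neg"])
print(f"macro F1 over pos/neg: {score:.3f}")
```

Because every class counts equally, a classifier cannot achieve a high macro F-score merely by doing well on the more frequent class.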

The results show that the number of unique positive tweets is twice that of the number of unique negative tweets; but when including retweets, there are 23% more negative tweets overall indicating that negative tweets are getting more retweets and better traction on Twitter. Using a word shift graph analysis on the Twitter bios of authors of positive and negative tweets, the researchers observed that opinions on homeopathy appear to be correlated with political/religious ideologies of the authors (e.g., liberal vs nationalist, atheist vs Hindu).

The authors drew the following conclusions: to our knowledge, this is the first study to analyze public opinions on homeopathy on any social media platform. Our results surface a tricky landscape for public health agencies as they promote evidence-based therapies and preventative measures for COVID-19.

I am not clear on how to interpret this study. What does it show and why is it important? The authors state this:

… our study cannot lead to meaningful conclusions about homeopathy’s overall online landscape. We also enforced the English language constraint while analyzing the tweets which excludes the views and opinions of all the non-English speaking users, who constitute an overwhelming majority of the world’s population. However, our effort is a first step in the direction of examining the support for alternative medicines especially for homeopathy which has not been studied in the past. At least on Twitter, our findings indicate that negative opinions are gaining more traction in the context of COVID-19.

Opinions expressed on Twitter are influenced by an array of entirely different factors many of which are unpredictable or even unknown. Therefore, I am unsure what to make of these findings. Perhaps some of my readers have an idea?

Guest post by Norbert Aust and Viktor Weisshäupl

Readers of this blog may remember the recent study of Frass et al. about the adjunct homeopathic treatment of patients suffering from non-small cell lung cancer (here). It was published in 2020 in the ‘Oncologist’, a respectable journal, and came to stunning results about the effectiveness of homeopathy.

In our analysis, however, we found strong indications of duplicity: important study parameters like exclusion criteria or observation time were modified post hoc, and the data showed characteristics that occur when unwanted data sets get removed.

We, that is the German Informationsnetzwerk Homöopathie and the Austrian ‘Initiative für wissenschaftliche Medizin’, had informed the Medical University Vienna about our findings – and the research director then asked the Austrian Agency for Scientific Integrity (OeAWI) to review the paper. The analysis took some time and included not only the paper and publicly available information but also the original data. In the end, OeAWI corroborated our findings: The results are not based on sound research but on modified or falsified data.

Here is their conclusion in full:

The committee concludes that there are numerous breaches of scientific integrity in the Study, as reported in the Publication. Several of the results can only be explained by data manipulation or falsification. The Publication is not a fair representation of the Study. The committee cannot for all the findings attribute the wrongdoings and incorrect representation to a single individual. However following our experience it is highly unlikely that the principal investigator and lead author, but also the co-authors were unaware of the discrepancies between the protocols and the Publication, for which they bear responsibility. (original English wording)

Profil, the leading news magazine of Austria, reported on the case in its issue of October 24, 2022 (pp. 58-61, in German). There, the lead author, Prof. M. Frass, a member of Edzard’s alternative medicine hall of fame, was asked for his comments. Here is his concluding statement:

All the allegations are known to us and completely incomprehensible, we can refute all of them. Our work was performed observing all scientific standards. The allegation of breaching scientific integrity is completely unwarranted. To us, it is evident that not all documents were included in the analysis of our study. Therefore we requested insight into the records to learn about the basis for the final statement.

(Die Vorwürfe sind uns alle bekannt und absolut unverständlich, alle können wir entkräften. Unsere Arbeit wurde unter Einhaltung aller wissenschaftlichen Standards durchgeführt. Der Vorhalt von Verstößen gegen die wissenschaftliche Integrität entbehrt jeder Grundlage. Für uns zeigt sich offenkundig, dass bei der Begutachtung unserer Studie nicht alle Unterlagen miteinbezogen wurden. Aus diesem Grunde haben wir um Akteneinsicht gebeten, um die Grundlagen für das Final Statement kennenzulernen.)

The OeAWI together with the Medical University Vienna asked the ‘Oncologist’ for a retraction of this paper – which has not occurred as yet.

Aging often contributes to a decrease in physical activity. As age advances, a decrease in muscle mass, muscle strength, and flexibility can impair physical function. One obvious way to prevent these developments might be regular physical exercise.

This open-label, randomized trial was intended to evaluate the effects of an integrated yoga module in improving the flexibility, muscle strength, and quality of life (QOL) of older adults. Participants were 96 older adults, aged 60-75 years (64.1 ± 3.95 years). The program was a three-month, yoga-based lifestyle intervention. The participants were randomly allocated to the intervention group (n = 48) or to a waitlisted control group (n = 48). The intervention group underwent three one-hour sessions of yoga weekly, with each session including loosening exercises, asanas, pranayama, and meditation.

At baseline and post-intervention, the following assessments were made:

- spinal flexibility using a sit-and-reach test,

- back and leg strength using a back leg dynamometer,

- handgrip strength (HGS) and endurance (HGE) using a hand-grip dynamometer,

- Older People’s Quality of Life (OPQOL) questionnaire.

Analysis was performed employing Wilcoxon’s Sign Rank tests and Mann-Whitney Tests, using an intention-to-treat approach.

The results show that, compared to the control group, the intervention group experienced a significantly greater increase in spinal flexibility (P < .001), back leg strength (P < .001), HGE (P < .01), and QOL (P < .001) after three months of yoga.

The authors concluded that yoga can be used safely for older adults to improve flexibility, strength, and functional QOL. Larger randomized controlled trials with an active control intervention are warranted.

I agree with the authors that this trial was too small and not properly controlled. I disagree that their study shows yoga to be effective or safe. In fact, the two sentences of the conclusion do not seem to fit together at all.

Is it surprising that doing yoga exercises is better than doing nothing at all?

No!

Is it relevant to demonstrate this fact in an RCT?

No!

If anyone wants to test the value of yoga exercises, they must compare them to conventional exercises. And why don’t they do this? Could it be because they know they would be unlikely to show that yoga is superior?

Here in the UK, we are looking yet again for a new Prime Minister (PM). Did I say ‘we’? That’s not quite true; the Tory party is hunting for one, and it seems a difficult task for the talent-depleted Conservatives. Eventually, the geriatric group of Tory members might again have the say. Amazingly, some senior Tories are already suggesting Boris Johnson (BJ).

To me, this demonstrates how common cognitive decline and memory loss seem to be among the elderly. They have evidently forgotten that, until only a few months ago, BJ was our PM.

Yes, it is often the short-term memory that suffers first!

It might, therefore, help to remind the Tory membership thus affected that BJ:

- was elected as PM in 2019,

- then created scandal after scandal,

- was even found guilty of breaking the law,

- is still under investigation for misleading Parliament,

- was eventually, in 2022, removed from office after mishandling a sexual abuse scandal.

I hope this helps to refresh your memory, Tory members suffering from cognitive decline. Considering this blog is about so-called alternative medicine (SCAM), I should perhaps also offer you some treatments for the often progressive deterioration of mental capacity. Here is a recent paper that might point you in the right direction:

Senile ages of human life is mostly associated with developmental of several neurological complicated conditions including decreased cognition and reasoning, increased memory loss and impaired language performance. Alzheimer’s disease (AD) is the most prevalent neural disorder associated with dementia, consisting of about 70% of dementia reported cases. Failure of currently approved chemical anti-AD therapeutic agents has once again brought up the idea of administering naturally occurring compounds as effective alternative and/or complementary regimens in AD treatment. Polyphenol structured neuroprotecting agents are group of biologically active compounds abundantly found in plants with significant protecting effects against neural injuries and degeneration. As a subclass of this family, Flavonoids are potent anti-oxidant, anti-inflammatory and signalling pathways modulatory agents. Phosphatidylinositol 3-kinase (PI3K)/AKT and mitogen activated protein kinase (MAPK) pathways are both affected by Flavonoids. Regulation of pro-survival transcription factors and induction of specific genes expression in hippocampus are other important anti AD therapeutic activities of Flavonoids. These agents are also capable of inhibiting specific enzymes involved in phosphorylation of tau proteins including β-secretases, cyclin dependent kinase 5 and glycogen synthase. Other significant anti AD effects of Flavonoids include neural rehabilitation and lost cognitive performance recovery. In this review, first we briefly describe the pathophysiology and important pathways involved in pathology of AD and then describe the most important mechanisms through which Flavonoids demonstrate their significant neuroprotective effects in AD therapy.

Sorry, I forgot that this might be a bit too complex for semi-senile Tories. Put simply, this means consuming plenty of:

- berries,

- apples,

- garlic,

- onion,

- green tea,

- beans (beware flatulence in Parliament!)

In addition, I might advise you to stay off the Port, get enough rest, avoid stress of any type, and do plenty of aerobic exercise. And please:

- not too much excitement,

- stimulate your brain (this means avoid reading right-wing papers),

- no major scandals,

- no further deterioration of moral standards,

- no more lies,

- no more broken promises!

In other words, no vote for BJ!!!

Yesterday, L’EXPRESS published an interview with me. It was introduced with these words (my translation):

Professor emeritus at the University of Exeter in the United Kingdom, Edzard Ernst is certainly the best connoisseur of unconventional healing practices. For 25 years, he has been sifting through the scientific evaluation of these so-called “alternative” medicines. With a single goal: to provide an objective view, based on solid evidence, of the reality of the benefits and risks of these therapies. While this former homeopathic doctor initially thought he was bringing them a certain legitimacy, he has become one of their most enlightened critics. It is notably as a result of his work that the British health system, the NHS, gave up covering homeopathy. Since then, he has never ceased to alert us to the abuses and lies associated with these practices. For L’Express, he looks back at the challenges of regulating this vast sector and deciphers the main concepts put forward by “wellness” professionals – holism, detox, prevention, strengthening the immune system, etc.

The interview itself is quite extraordinary, in my view. While UK, US, and German journalists usually are at pains to tone down my often outspoken answers, the French journalists (there were two doing the interview with me) did nothing of the sort. This starts with the title of the piece: “Homeopathy is implausible but energy healing takes the biscuit”.

The overall result is one of the most outspoken interviews of my entire career. Let me offer you a few examples (again my translation):

Why are you so critical of celebrities like Gwyneth Paltrow who promote these wellness methods?

Sadly, we have gone from evidence-based medicine to celebrity-based medicine. A celebrity without any medical background becomes infatuated with a certain method. They popularize this form of treatment, very often making money from it. The best example of this is Prince Charles, sorry Charles III, who spent forty years of his life promoting very strange things under the guise of defending alternative medicine. He even tried to market a “detox” tincture, based on artichoke and dandelion, which was quickly withdrawn from the market.

How to regulate this sector of wellness and alternative medicines? Today, anyone can present himself as a naturopath or yoga teacher…

Each country has its own regulation, or rather its own lack of regulation. In Germany, for instance, we have the “Heilpraktiker”. Anyone can get this paramedical status; you just have to pass an exam showing that you are not a danger to the public. You can retake this exam as often as you want. Even the dumbest will eventually pass. But these practitioners have an incredible amount of freedom; they may even give infusions and injections. So there is a two-tier health care system, with university-trained doctors and these practitioners.

In France, you have non-medical practitioners who are fighting for recognition. Osteopaths are a good example. They are not officially recognized as a health profession. Many schools have popped up to train them, promising a good income to their students, but today there are too many osteopaths compared to the demand of the patients (knowing that nobody really needs an osteopath to begin with…). Naturopaths are in the same situation.

In Great Britain, osteopaths and chiropractors are regulated by statute. There is even a Royal College dedicated to chiropractic. It’s a bit like having a Royal College for hairdressers! It’s stupid, but we have that. We also have professionals like naturopaths, acupuncturists, or herbalists who have an intermediate status. So it’s a very complex area, depending on the state. It is high time to have more uniform regulations in Europe.

But what would adequate regulation look like?

From my point of view, if you really regulate a profession like homeopaths, it means that these professionals may only practice according to the best scientific evidence available. Which, in practice, means that a homeopath cannot practice homeopathy. This is why these practitioners have a schizophrenic attitude toward regulation. On the one hand, they would like to be recognized to gain credibility. But on the other hand, they know very well that a real regulation would mean that they would have to close shop…

What about the side effects of these practices?

If you ask an alternative practitioner about the risks involved, he or she will take exception. The problem is that there is no system in alternative medicine to monitor side effects and risks. However, there have been cases where chiropractors or acupuncturists have killed people. These cases end up in court, but not in the medical literature. The acupuncturists have no problem saying that a hundred deaths due to acupuncture – a figure that can be found in the scientific literature – is negligible compared to the millions of treatments performed every day in this discipline. But this is only the tip of the iceberg. There are many cases that are not published and therefore not included in the data, because there is no real surveillance system for these disciplines.

Do you see a connection between the wellness sector and conspiracy theories? In the US, we saw that Qanon was thriving in the yoga sector, for example…

Several studies have confirmed these links: people who adhere to conspiracy theories also tend to turn to alternative medicine. If you think about it, alternative medicine is itself a conspiracy theory. It is the idea that conventional medicine, in the name of pharmaceutical interests, in particular, wants to suppress certain treatments, which can therefore only exist in an alternative world. But in reality, the pharmaceutical industry is only too eager to take advantage of this craze for alternative products and well-being. Similarly, universities, hospitals, and other health organizations are all too willing to open their doors to these disciplines, despite the lack of evidence of their effectiveness.

It has been reported that a father accused of withholding insulin from his eight-year-old diabetic daughter and relying on the healing power of God has been committed to stand trial for her alleged murder.

Jason Richard Struhs, his wife Kerrie, and 12 others from a fringe religious group have been charged over the death of type 1 diabetic Elizabeth Rose Struhs. Police alleged she had gone days without insulin and then died. The police prosecutor detailed statements from witnesses and experts, including pediatric consultant Dr. Catherine Skellern, who said Elizabeth’s death “would have been painful and was over a prolonged period of days”.

“There is [also] body-worn camera footage at the scene … where Jason Struhs has recounted the events of the week leading up to the death of Elizabeth,” said the prosecutor. “This details the decision that Jason Struhs has made to stop the administration of insulin, and he stated that he knew the consequences, and he stated in that recording that he will ‘probably go to jail like they put Kerrie in jail’.”

During the hearing, Struhs, who appeared from jail by videolink, mainly sat with his head bowed and hands clasped against his forehead as magistrate Clare Kelly described the evidence against him. “It is said that Mr. Struhs, his wife Kerrie Struhs, and their children, including Elizabeth, were members of a religious community… The religious beliefs held by the members of the community include the healing power of God and the shunning of medical intervention in human life.” She also described a statement from Skellern suggesting Elizabeth would have spent her final days suffering from “insatiable thirst, weakness and lethargy, abdominal pain, incontinence, and the onset of impaired levels of consciousness”. The evidence read into court was an attempt by prosecutors to firm up an additional charge of torture. She said a post-mortem found Elizabeth’s cause of death was diabetic ketoacidosis, caused by a lack of insulin. “It is a life-threatening condition, which requires urgent medical treatment,” Kelly said.


___________________________

Cases like these are tragic, all the more so because they might have been preventable with more information and critical thinking. They make me desperately sad, of course, but they also convince me that my work with this blog should continue.

The objective of this study was to evaluate the effect of acupuncture on cognitive task performance in college students.

Sixty students aged 18-25 years were randomly allocated into acupuncture group (AG) (n=30) and control group (CG) (n=30). The AG underwent 20 min of acupuncture/day, while the CG underwent their normal routine for 10 days. Assessments were performed before and after the intervention.

Between-group analysis showed a significant increase in AG’s six-letter cancellation test (SLCT) score compared with CG. Within-group analysis showed a significant increase in the scores of all tests (i.e. SLCT, forward and backward Digit span test [DST]) in AG, while a significant increase in backward DST was observed in CG.

The authors concluded that acupuncture has a beneficial effect on improving the cognitive function of college students.

I am unable to access the full paper [it is behind a paywall]. Thus, I am unable to assess the study in further detail. As I am skeptical about the validity of the effect, I can only assume that it is due to the expectation of the volunteers receiving acupuncture. There was not even an attempt to control for placebo effects!

The over-stated conclusion made me wonder what else the 1st author has published. It turns out he has three more Medline-listed papers to his name all of which are about so-called alternative medicine (SCAM).

The 1st one is an RCT similar to the one above, i.e. without an attempt to control for placebo effects. Its conclusion is equally over-stated: Acupuncture could be considered as an effective treatment modality for the management of primary dysmenorrhea.

The other two papers refer to one case report each. Despite the fact that case reports (as any researcher must know) do not lend themselves to conclusions about the effectiveness of the treatments employed, the authors’ conclusions seem to again over-state the case:

- This suggests that integrative Naturopathy and Yoga therapies could be considered as an adjuvant to conventional medicine in rheumatoid arthritis associated with type-2 diabetes and essential hypertension.

- Though the results are encouraging, further studies are required with larger sample size and advanced inflammatory markers.

What does that tell us?

I don’t know about you, but I would not rely on acupuncture to improve my mental performance.

Advocates of so-called alternative medicine (SCAM) often sound like a broken record to me. They bring up the same ‘arguments’ over and over again, no matter whether they happen to be defending acupuncture, energy healing, homeopathy, or any other form of SCAM. Here are some of the most popular of these generic ‘arguments’:

1. It helped me

The supporters of SCAM regularly cite their own good experiences with their particular form of treatment and think that this is proof enough. However, they forget that any symptomatic improvement they may have felt can be the result of several factors that are unrelated to the SCAM in question. To mention just a few:

- Placebo

- Regression towards the mean

- Natural history of the disease

2. My SCAM is without risk

Since homeopathic remedies, for instance, are highly diluted, it makes sense to assume that they cannot cause side effects. Several other forms of SCAM are equally unlikely to cause adverse effects. So, the notion is seemingly correct. However, this ‘argument’ ignores the fact that it is not the therapy itself that can pose a risk, but the SCAM practitioner. For example, it is well documented – and, on this blog, we have discussed it often – that many of them advise against vaccination, which can undoubtedly cause serious harm.

3. SCAM has stood the test of time

It is true that many SCAMs have survived for hundreds or even thousands of years. It is also true that millions still use them even today. This, according to enthusiasts, is sufficient proof of SCAM’s efficacy. But they forget that many therapies have survived for centuries, only to be proved useless in the end. Just think of bloodletting or mercury preparations from past times.

4. The evidence is not nearly as negative as skeptics pretend

Yes, there are plenty of positive studies on some SCAMs. This is not surprising. Firstly, from a purely statistical point of view, if we have, for instance, 1,000 studies of a particular SCAM, it is to be expected that, at the 5% level of statistical significance, about 50 of them will produce a significantly positive result by chance alone. Secondly, this number becomes considerably larger if we factor in the fact that most of the studies are methodologically poor and were conducted by SCAM enthusiasts with a corresponding bias (see my ALTERNATIVE MEDICINE HALL OF FAME on this blog). However, if we base our judgment on the totality of the most robust studies, the bottom line is almost invariably that there is no overall convincingly positive result.
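The arithmetic behind the "about 50" figure can be sketched as follows. I am assuming, for illustration only, that every study tests a treatment with no real effect, that each reports a single significance test, and that the studies are independent of each other; the function name is made up.

```python
import math


def expected_false_positives(n_studies, alpha):
    """Mean and standard deviation of the number of chance 'positive' results.

    Assumes independent studies of an ineffective treatment, each with one
    test at significance level alpha (a simple binomial model).
    """
    mean = n_studies * alpha
    sd = math.sqrt(n_studies * alpha * (1 - alpha))
    return mean, sd


mean, sd = expected_false_positives(n_studies=1000, alpha=0.05)
print(f"expected chance positives: {mean:.0f} (standard deviation about {sd:.1f})")
```

Under these simplifying assumptions, a pile of 50 or so "positive" trials is exactly what one would expect from a treatment that does nothing at all.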

5. The pharmaceutical industry is suppressing SCAM

SCAM is said to be so amazingly effective that the pharmaceutical industry would simply go bust if this fact became common knowledge. Therefore Big Pharma is using its considerable resources to destroy SCAM. This argument is fallacious because:

- there is no evidence to support it,

- far from opposing SCAM, the pharmaceutical industry is heavily involved in SCAM (for example, by manufacturing homeopathic remedies, dietary supplements, etc.).

6. SCAM could save a lot of money

It is true that SCAMs are on average much cheaper than conventional medicines. However, one must also bear in mind that price alone can never be the decisive factor. We also need to consider other issues such as the risk/benefit balance. And a reduction in healthcare costs can never be achieved by ineffective therapies. Without effectiveness, there can be no cost-effectiveness.

7. Many conventional medicines are also not evidence-based

Sure, there are some treatments in conventional medicine that are not solidly supported by evidence. So why do we insist on solid evidence for SCAM? The answer is simple: in all areas of healthcare, intensive work is going on aimed at filling the gaps and improving the situation. As soon as a significant deficit is identified, studies are initiated to establish a reliable basis. Depending on the results, appropriate measures are eventually taken. In the case of negative findings, the appropriate measure is to exclude treatments from routine healthcare, regardless of whether the treatment in question is conventional or alternative. In other words, this is work in progress. SCAM enthusiasts should ask themselves how many treatments they have discarded so far. The answer, I think, is zero.

8. SCAM cannot be forced into the straitjacket of a clinical trial

This ‘argument’ is surprisingly popular. It supposes that SCAM is so individualized, holistic, subtle, etc., that it defies science. The ‘argument’ is false, and SCAM advocates know it, not least because they regularly and enthusiastically cite those scientific papers that seemingly support their pet therapy.

9. SCAM is holistic

This may or may not be true, but the claim of holism is not a monopoly of SCAM. All good medicine is holistic, and in order to care for our patients holistically, we certainly do not need SCAM.

10. SCAM complements conventional medicine

This argument might be true: SCAM is often used as an adjunct to conventional treatments. Yet there is no good reason why a complementary treatment should be exempt from showing that adding it to another therapy is worth the effort and expense. If, for instance, you pay for an upgrade on a flight, you also want to make sure that it is worth the extra expenditure.

11. In Switzerland it works, too

That’s right, in Switzerland, a small range of SCAMs was included in basic health care by referendum. However, it has been reported that the consequences of this decision are far from positive. It brought no discernible benefit and only caused very considerable costs.

I am sure there are many more such ‘arguments’. Feel free to post your favorites!

My point here is this:

the ‘arguments’ used in defense of SCAM are not truly arguments; they are fallacies, misunderstandings, and sometimes even outright lies.